Switched-fabric vendors race toward military electronics applications

In the past, systems designers waited for a clear winner to emerge from the newest generation of high-speed serial-switched fabrics. Yet as time goes on, these designers are realizing that one size may not fit all, and they are looking for different fabrics for different applications.

By Ben Ames

Switched-fabric interconnects are fast-serial-data pipelines designed not only to replace bus-based I/O architectures, but also to supply better performance, availability, and reliability.

As processors get faster and components get smaller, they demand increasing amounts of bandwidth and I/O to perform tasks in high-performance, embedded computing. Engineers agree that fabrics can deliver that speed, yet they debate which of the half-dozen main types of fabric will do it best.

Fabric vendors are jockeying for places on new electronics platforms, while designers of military and aerospace electronics are waiting on the sidelines, relying on the tried-and-true bus-based I/O architectures such as PCI and VME.

They know they will eventually have to choose a fabric because of the pressing throughput demands of integrated processing, I/O, and memory on single-chip computers. Since microprocessor speeds keep growing faster than those of the I/O buses that feed them data, most high-performance computers face a performance bottleneck that many believe only switched fabrics can solve effectively.

"People have the wrong impression about fabrics; there are not four or five, there are 62," says Ray Alderman, executive director of the VME International Trade Association (VITA) in Fountain Hills, Ariz. He posts a comprehensive list of fabrics online at www.vita.com/vso/fabrics.html. "Of course, many are proprietary," Alderman explains. "The basic five are InfiniBand, Ethernet, StarFabric, RapidIO, and PCI Express."

VITA exists to support the VME backplane, so Alderman says he is wary of the new standards, calling them error-prone and untested. He also discounts the analogy of a horse race to determine a clear winner. Rather, he says, the fabric technologies are so different that each will find a little bit of market share.

Alderman says the fabrics fall into three philosophies of multiprocessing: tightly coupled, snugly coupled, and loosely coupled.

A tightly coupled system shares all resources (such as stacks and memory) between its processors. RapidIO is the best example, Alderman says. This type of system is the fastest, which is crucial for applications such as fire control, which demand guaranteed fast real-time processing.

A snugly coupled system shares some resources between processors. InfiniBand, StarFabric, and PCI Express use this method; their processors share memory, thus spending some bandwidth as overhead. The relatively slow speed of snugly coupled multiprocessing is appropriate for applications in signal processing or refreshing monitor screens.

A loosely coupled system shares no resources between its processors. Ethernet uses this approach and incurs a relatively large protocol overhead compared to any other fabric. A loosely coupled system is appropriate for applications that can handle latency, such as medical imaging and telecommunications.
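Alderman's three categories can be summarized as a small lookup table. The sketch below is illustrative only: the names `Coupling`, `FABRIC_COUPLING`, and `fabrics_by_coupling` are invented for this example, and the groupings simply restate his classification rather than any formal standard.

```python
from enum import Enum


class Coupling(Enum):
    """Alderman's three multiprocessing philosophies."""
    TIGHT = "shares all resources between processors"
    SNUG = "shares some resources between processors"
    LOOSE = "shares no resources between processors"


# Groupings as Alderman describes them in the text; not an official taxonomy.
FABRIC_COUPLING = {
    "RapidIO": Coupling.TIGHT,
    "InfiniBand": Coupling.SNUG,
    "StarFabric": Coupling.SNUG,
    "PCI Express": Coupling.SNUG,
    "Ethernet": Coupling.LOOSE,
}


def fabrics_by_coupling(coupling):
    """Return the fabric names in one category, sorted alphabetically."""
    return sorted(name for name, c in FABRIC_COUPLING.items() if c is coupling)
```

Calling `fabrics_by_coupling(Coupling.SNUG)` returns the three fabrics that Alderman places in the middle category.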

Even with those qualifications, however, fabric vendors are promising more than they can deliver, Alderman claims.

"There's less known about these fabrics than is being said about them," he says. "You need millions of hours of run time to be able to predict and correct the aberrations. When you get to high frequencies, above 3.5 GHz, the gods of physics have a tremendous sense of humor. There's evidence of a direct relationship between temperature and bit-error rate, and between humidity and bit-error rate. Until that research is done and certified, I won't be satisfied."

Until fabrics are exhaustively tested, military contractors will not use them for high-end platforms, Alderman says.

"The initial implementation of fabrics will be for low-end, non-mission-critical jobs moving big blocks of data where they can live with the latency. Military designers will use switched Ethernet or StarFabric or InfiniBand to handle communications or to move some files, but not for any real-time applications," he says.

"And those implementations will be simple add-ons to VME, as VXS/VITA 41. All the big stuff will continue to be done on the backplane in hard real time."

Ethernet

Designers look at more than raw speed when they shop around for a switched-fabric network, says Frank Phelan, principal design engineer at Synergy Microsystems in San Diego, Calif. They also value endurance.

"I'm seeing a trend of the military pushing contractors to pick standards that will last longer. Because otherwise, they spend more money on software and services for less ubiquitous standards," he says. That favors Ethernet, which Phelan says is the oldest of all the major switched fabrics.

"The world has accepted it as a standard, and military planners like that interoperability with older platforms," he says.

Basic Ethernet is too slow for the newest platforms, but engineers will soon get comfortable with the upgraded versions — Gigabit Ethernet and 10-Gigabit Ethernet, experts say. By comparison, options like Fibre Channel and InfiniBand might be just as fast, but they do not have Ethernet's momentum.

"Yes, there's a protocol overhead with Ethernet, but it is still just sending bits down a wire. I don't see anything that can't be dealt with in software," Phelan says. "The speed of all the different options is just a function of physics — wires, switches, cables, fiber, and chips."

Another trend in military design is the effort to reduce wire count, by using serial connections instead of a parallel bus architecture.

As a result, PCI Express will be another winner for three reasons, Phelan says: it offers a relatively low pin count, it is familiar to engineers accustomed to PCI, and it is backed by industry giant Intel.

The driving force among systems designers involved in this market is the conflict between the growing complexity of electronics and the relatively slow growth of processing power.

"We're stuck with how fast processors increase their performance," Phelan says. "According to Moore's Law, all we can do to increase performance is wait 18 months, or hook a bunch of them together with either I/O or shared memory."

Systems that use shared memory avoid I/O traffic by keeping a common memory visible on both ends of a network. Examples include Mercury Raceway, StarFabric, and PCI Express Advanced Switching. That is a fundamentally different approach from Ethernet, which shares data by sending a series of I/O packets.
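The difference between the two approaches can be sketched in a few lines. This is only an in-process analogy, not a model of any real fabric: the helper names `packetize` and `reassemble`, the four-byte payload size, and the sample message are all assumptions made for illustration.

```python
def packetize(data: bytes, payload_size: int):
    """Split data into (sequence_number, payload) packets."""
    return [
        (seq, data[offset:offset + payload_size])
        for seq, offset in enumerate(range(0, len(data), payload_size))
    ]


def reassemble(packets):
    """Rebuild the original bytes, tolerating out-of-order arrival."""
    return b"".join(payload for _, payload in sorted(packets))


# Shared-memory style: both ends hold a view of one common buffer, so a
# write on one side is visible on the other with no transfer step.
common = bytearray(8)
producer_view = memoryview(common)
consumer_view = memoryview(common)
producer_view[0:4] = b"data"

# Packet style: the sender copies the data into numbered packets and the
# receiver rebuilds it on arrival (here the packets arrive in reverse).
message = b"sensor frame 0001"
packets = packetize(message, payload_size=4)
received = reassemble(reversed(packets))
```

In the first half the consumer reads the bytes without anything being sent; in the second, every byte is copied into a packet and reassembled, which is where the protocol overhead comes from.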

The competition between switched fabrics will not produce one clear winner, like a horse race. Instead, the winning standards will have the largest market share, while losers will be relegated to niche applications.

"My guess is that in five years, they'll all still be there, but not in the mainstream," Phelan says. "They're all based on the same physics, sending data over the same twisted wires. So it really doesn't matter which one you pick, or whether you're getting 2.5, or 5, or 10 gigabits per second. It all boils down to a software issue — how do you use your data and guarantee bandwidth over the link?"

Fitting fabrics on boards

The dominant fabric may be chosen as much by marketing as by technology, says Bill Schuh, military products manager at avionics circuit-board designer Condor Engineering in Albuquerque, N.M.

"It depends who comes to the plate and how big they are," he says. "If Intel pushes a PCI chip, or if Motorola puts RapidIO on all its chips, that will make a difference. It's the old Betamax versus VHS thing again."

In the meantime, designers continue to use Ethernet in new platforms, since they are familiar with its classic technology. This momentum might slow in future years, as complex electronics demand higher performance. But changing fabrics is not a simple operation, Schuh warns.

"Look at the processor overhead of Ethernet; if you use it for real-time applications, you have to make changes, and suddenly it ain't so cheap anymore. Or if you use RapidIO to handle latency, then you need to program your communications chip," he says.

"Customers look at Fibre Channel and think they can simply throw it in. That's OK for telecom, but for military command and control with low latency, they suddenly realize there's no bus scheduling, no link lists, and there are secondary protocols under the data. Now they have to dedicate a PowerPC chip to do communications."

So designers must choose fabrics based not only on raw speed, but also adaptability.

"There's a lot of programming to be done. You'd really better understand the ins and outs or you'll be digging yourself into a hole. That's the untold story here," Schuh says.

RapidIO

A common belief is that systems designers are trying to pick a winner, but in truth, there will be many survivors of the fabric wars, says Sam Fuller, president of the RapidIO Trade Association in Austin, Texas. Each interconnect has its own strength, so different technologies are solving different problems in the marketplace. Options such as InfiniBand and HyperTransport will always excel at certain applications.

"Where InfiniBand is successful today and will clearly survive is supercomputers, where it competes against Myrinet," says Craig Lund, chief technical officer of Mercury Computer Systems in Chelmsford, Mass., and a founding member of the association. "InfiniBand is a big, massive thing with lots of functionality. It won't ever go away, but the question is its high price point."

HyperTransport acts much like a processor bus, with some applications in high-performance computing, Fuller says. It will continue to serve those applications, but will have no traction in the embedded market; since its interface is not serial, it will not go across a backplane, Lund says.

RapidIO also serves a niche, and will struggle for market share until it is available in silicon. "A lot of people wonder why they've heard so much about RapidIO but have not seen much on the market yet," Lund says. "In the applications we're targeting, designers have to go through a prototyping stage first to test new technologies. We've been there already in FPGA to build confidence among users. So actual production relies on the market."

Two recent developments will accelerate the process, he says: RapidIO won ISO certification in December 2003, and developers released streaming fabric extensions for applications in DSLAMs (digital subscriber line access multiplexers) and high-end routers.

"A lot of people have the idea that Parallel RapidIO was the first generation, wiped out by Serial RapidIO," says Lund. "That's not true; they're different protocols over the same electricals. There's less latency in parallel, since it doesn't have to serialize and deserialize on each end. Whereas the control signal is embedded in serial and it will actually travel a longer distance."

As with the other competing standards, there is room for several approaches in the market, Fuller says.

"We completed the parallel spec three years ago, so there are now products based on that in the market. And we completed the serial spec two years ago, so there are a number of programs now in development. We expect to announce them in the second half of this year."

InfiniBand

"Sky chose a couple of years ago to put its money on one horse in the switched-fabric area: InfiniBand," says Mark Pacelle, vice president of marketing for Sky Computers in Chelmsford, Mass.

Company leaders like the network because it offers high bandwidth and low latency, resiliency to failed nodes, self-healing properties, and low overhead on the processor. Sky officials recently launched InfiniBand into the market with the SMARTpac 600, a front-end, data-acquisition server, and the SMARTpac 1200, a compute server for signal processing and image analysis.

"This is the first instantiation of InfiniBand in the high-performance embedded computing space, and it's scalable, particularly compared with other switched fabrics. It will work with chassis, racks, or systems," Pacelle says.

Researchers at Virginia Tech in Blacksburg, Va., demonstrated that scalability in September when they used InfiniBand to link 1,100 Apple Power Mac G5 computers together. The resulting Terascale Cluster supercomputer is one of the world's largest, researchers say.

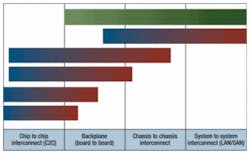

When engineers compare switched fabrics, their options generally split into two camps, says Steve Paavola, chief technical officer for Sky Computers. If they need a chip-to-chip interconnect, they look at RapidIO, PCI-X, PCI Express, HyperTransport, and StarFabric. If they need a high-availability, secure system interconnect, they look at InfiniBand and Ethernet.

"The problem with Gigabit Ethernet is that it demands significant CPU cycles, and with 10-Gigabit Ethernet something has to give. One of the reasons we went with InfiniBand is that it has almost no load on the processor," Paavola says.

On the chip-to-chip side, Serial RapidIO looks good on paper, but is still not available in silicon. HyperTransport, meanwhile, has been around a long time; it offers excellent performance and is widely deployed in applications like home entertainment systems, but it never caught on for military applications.

When PCI Express begins shipping next month, it could win many converts, thanks to a powerful push from Intel, Paavola says. It's not a multiprocessor-switched fabric, like InfiniBand, so they won't compete directly. But the pending PCI Express Advanced Switching will be much closer, like a bus-to-bus architecture, he says.

Another new InfiniBand product is the dual-port 10-gigabit-per-second InfiniBand host-channel-adapter PMC card from SBS Technologies Inc. in Albuquerque, N.M. It is designed to deliver the high throughput and low CPU utilization required for 10-gigabit-per-second InfiniBand data transport in embedded applications and high-performance computer clusters.

The 4x InfiniBand protocol supports four 2.5-gigabit-per-second dual-simplex connections, called "lanes," for an effective duplex transmission speed of 10 gigabits per second. Designers can double or triple those lanes to create 8x (20 gigabit-per-second) or 12x (30 gigabit-per-second) InfiniBand.
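The lane arithmetic is simple multiplication, as the short sketch below shows. The function name `link_rate_gbps` and the constant name are invented for this example; the 2.5-gigabit-per-second lane rate is the figure quoted above, and the calculation ignores any encoding or protocol overhead.

```python
LANE_RATE_GBPS = 2.5  # per-lane signaling rate quoted in the text


def link_rate_gbps(lanes: int) -> float:
    """Aggregate rate of a link built from the given number of lanes."""
    return lanes * LANE_RATE_GBPS


# Link widths mentioned in the text: 4x, 8x, and 12x.
rates = {f"{lanes}x": link_rate_gbps(lanes) for lanes in (4, 8, 12)}
```

Here `rates` maps each link width to its aggregate rate: 10, 20, and 30 gigabits per second for the 4x, 8x, and 12x links respectively.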

"The only thing that generates that much data is memory, so switched-fabric blades would use a 12x cable. But right now, 4x would push any computer out there to its limits," says Steve Cook, InfiniBand technologist at SBS.

Designers might be able to use the IB4X-PMC-2 primarily for specialty applications to pump bulk video or other big bandwidth channels, he says. Military applications might include simulation, video transfer, or redundant backups, since each PMC acts as a dual port.

"The RapidIO people say they're the best, but they don't have any product out," says Lori Dunbar, InfiniBand engineering manager at SBS. "The thing about InfiniBand is that a lot of the technology and features aren't new. So there's a lot of reliability built in; that's where it shines. It's nonproprietary, open-source technology that can be used for military applications."

StarFabric

Military electronics engineers are struggling to find ways to increase I/O performance, increase bandwidth to the processors, and handle multiprocessor systems, says Herman Paraison, product manager at StarGen in Marlboro, Mass. They face a challenge: as I/O and processor performance increase, the interconnect is the bottleneck. As a result, they are migrating away from bus-based connections, and toward switched interconnect technologies.

StarGen's solution is the StarFabric switched PCI interconnect product line, used today in military command and control, semiconductor test, communication routers and switches, storage equipment, medical imaging, and video servers.

The company sells two solutions, both running at 2 gigabits per second. The relatively old approach is a StarFabric-to-PCI bridge that can handle a PCI expansion to several buses in the same backplane, or across several backplanes (within or between boxes).

"The legacy StarFabric product is key for customers who need a fast time-to-market, and who don't want to pay as much for software as they would with InfiniBand," Paraison says.

The second, path-routing approach isolates different parts of the system and enhances processor-to-processor communication. It scales beyond one blade or processor, whether the designer is creating a small cluster or DSP farm or handling image processing that needs dedicated processing resources.

Path routing is best for the customer building a multiprocessor system, like a blade server. It also enables redundancy with several fabrics, to increase high availability on both I/O and processing.

StarGen designers will offer an improved version using 10-gigabit-per-second Advanced Switching in the first half of 2005. Advanced Switching is the first standard switched interconnect that will help converge computer and communications systems. It is a mezzanine-level interconnect for inside-the-box processor-to-processor or processor-to-I/O communication.

Alternatively, engineers could choose Ethernet. But StarFabric is faster than 10/100 Ethernet, and is less expensive than Gigabit Ethernet, he says. The company's Advanced Switching product will reach the market before 10-Gigabit Ethernet.

Likewise, designers have been using InfiniBand more for large servers and clusters than for the applications where StarFabric competes, he says. Serial RapidIO is still in development, so designers must settle for Parallel RapidIO today, he says.

"So the momentum is behind Advanced Switching and PCI Express, and it will be a challenge for RapidIO to get a foothold in that space again," he says.

Many customers agree. BAE Systems uses StarFabric in the electronic warfare functions of the F-35 Joint Strike Fighter as a communications structure between processing elements and hardware-based functional elements.

In other applications, SeaChange International Inc. of Maynard, Mass., uses it to move data within a video server, for uses such as digital cable television and image processing in military space applications. SVA Networks uses the StarFabric 2010 PCI Bridge for a redundant control plane in its 10k-8 router.

Fabric flies on Joint Strike Fighter

The increasing amount of data flowing between every battlefield platform — from soldiers and sensors to unmanned aerial vehicles (UAVs) — is driving demand for switched-fabric networks.

Digital-signal-processing (DSP) boards are the classic example of a product that needs such a high-performance interconnect to send and receive bulk data. Two years ago, engineers at Dy 4 Systems in Kanata, Ontario, chose StarFabric as their fabric of the future for DSP interconnect, and began shipping products by summer of 2002, says Ian Stalker, manager of marketing communications.

They chose StarFabric because it fit well with the VME backplane, a ubiquitous component in military and aerospace platforms. The company also builds a PMC carrier card that uses StarFabric, so the PMC can function as a node in switched fabric, while keeping the I/O flow separate from the processing, Stalker says. They also offer Fibre Channel connections between boards and processors, but that technology offers a weaker balance of cost versus capability, Stalker says.

And in January the company launched the CHAMP-FX, a 6U VME form-factor board running a pair of Xilinx Virtex-II Pro FPGAs as its core engines, with various I/O links including two StarFabric bridges and support for the VITA 42 XMC switched-mezzanine specification.

Engineers at Dy 4 prefer StarFabric because of its simplicity; it does not demand the headroom of a complex protocol, even when it handles heavy data flow such as FLIR, radar, or sonar signals, says Rob Hoyecki, Dy 4's product marketing manager for DSP, in Leesburg, Va.

In comparison, Gigabit Ethernet could also handle that bandwidth, but only at the cost of taxing the processor with its protocol, he says. Likewise, InfiniBand works well between boxes or between shelves, but incurs higher latency through its software protocol. In contrast, RapidIO and PCI Express perform well with both low latency and low overhead.

The approach must be working, because planners at BAE Systems Information & Electronic Warfare Systems (IEWS) in Nashua, N.H., announced in February they would use Dy 4 to supply a high-performance, rugged computing module for the F-35 Joint Strike Fighter. The new module will be based on the CHAMP-AV II Quad PowerPC 7410 digital signal processor, with integrated StarFabric switched interconnect technology. The F-35 has already adopted StarFabric as its interconnect.

The fabric is a good match for high-reliability platforms like avionics or torpedoes because it runs with low latency, redundant paths, degraded mode survivability, priority interrupt, and multitasking, says Joe Jacob, a Dy 4 technical product manager. In fact, many modern platforms demand reliable, high-bandwidth connections. So the emerging VITA 46 standard is crucial to ensure that connection, Stalker says.

A designer can achieve signal rates well into the low gigahertz whether he chooses PCI Express, Serial RapidIO, or 10-Gigabit Ethernet, he says. VITA 46 will ensure a switched-fabric computer format that handles that data flow much better than existing VME connectors. Most important, it is flexible, so it can handle legacy VME boards as well as whichever switched fabric rises to the top of the heap.

That is one reason that Dy 4 planners are predicting a segmented market, as opposed to a clear winner among the switched-fabric technologies. For instance, RapidIO is a strong technology but it may not win, because vendors still haven't produced any silicon, says Jacob. InfiniBand is a fine box-to-box interconnect, but it can't do everything. And PCI-X could gain market share this year simply because of its availability in silicon.

Two questions for choosing a fabric

The most important point in choosing a switched fabric technology is to find the one that is best for a specific task. All the different switched-fabric technologies do not necessarily belong in the same basket, says Bernard Pelon, marketing director at CSP Inc. in Billerica, Mass.

An engineer should ask two questions to narrow his choices: What is the physical layer? And what are the protocol and stack assumptions? The future of switched fabrics is in serial connections, he says. Parallel fabrics demand too many pins and wires, and can't cover long distances. The best fabric will also use a duplex connection, not one-way, so the user can detect broken links. The fabric should not use shared memory, since that architecture discourages scaling and is hard to debug, he says.
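Pelon's rules of thumb amount to a three-part filter, which can be sketched as follows. Everything here is hypothetical: the `FabricProfile` type, the `meets_pelon_criteria` function, and the three placeholder candidates are invented for illustration and do not describe any real fabric.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FabricProfile:
    name: str
    serial: bool         # serial rather than parallel physical layer
    duplex: bool         # two-way link, so broken links can be detected
    shared_memory: bool  # relies on a shared-memory architecture


def meets_pelon_criteria(fabric: FabricProfile) -> bool:
    """Apply the three rules from the text: serial, duplex, no shared memory."""
    return fabric.serial and fabric.duplex and not fabric.shared_memory


# Hypothetical candidates; the attribute values are placeholders,
# not claims about any real product.
candidates = [
    FabricProfile("candidate-a", serial=True, duplex=True, shared_memory=False),
    FabricProfile("candidate-b", serial=False, duplex=True, shared_memory=False),
    FabricProfile("candidate-c", serial=True, duplex=True, shared_memory=True),
]
shortlist = [f.name for f in candidates if meets_pelon_criteria(f)]
```

Only the first placeholder passes all three checks. Other engineers quoted in this article would weigh the criteria differently, particularly the rule against shared memory.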

Following all those rules, engineers at CSPI apply their Myrinet switched fabric most often to applications in embedded clusters and supercomputers. In fact, Myrinet is the fabric on 193 of the world's top 500 supercomputers, Pelon says. Company designers choose open-architecture APIs to ensure portability, and choose the Linux operating system for its strength in containing failed nodes.

Myrinet is also a component in the upgrade program for the E-2C Hawkeye airborne warning and control plane, he says. Applications in military platforms demand another important feature of switched fabrics: they must be flexible.

Fabrics have a longer lifespan than most other technologies, such as processors that must be replaced every 12 months. As long as five years can pass between the time a contractor chooses components and the time the platform is finally deployed. So the ideal switched fabric will work with a wide variety of other technologies.

Myrinet is best suited to clusters. InfiniBand is fast, but demands a complex and expensive stack of protocols, he says. RapidIO has a simpler protocol stack, but doesn't offer a full array of attributes. "If you're making a simple application, then RapidIO may be just right," Pelon says. "If you need I/O, then PCI or PCI-X is going to be beautiful for it."

In the long run, PCI Express and Serial RapidIO will be dominant players in the market, he says.

Fabrics and VME

"Everyone agrees we need switched fabrics," says Richard Jaenicke, director of product marketing at Mercury Computer Systems in Chelmsford, Mass. "The first attempt was VITA 41, which was an improvement from VITA 64x. Now, we've agreed that VITA 46 will be the next generation of VME."

Backed by leaders of Dy 4 Systems, Radstone Technology in Towcester, England, and Vista Controls in Santa Clarita, Calif., planners at Mercury announced their intention in January to back VITA 46 as the best new specification.

"The goal of the VITA 46 standard is to provide high-speed signals for both serial-switch fabrics and high-speed I/O, as well as legacy VME or PCI protocols, for the spectrum of commercial and military systems," the group's press release says.

The change will not happen overnight. This new standard supports the two legacy, parallel buses, so designers of military platforms will likely upgrade their systems slowly, evolving from existing electronics to hybrid architectures, before running purely switched fabric systems.

The new standard has to satisfy many requirements, Jaenicke says: boost bandwidth, maintain backward compatibility, and still use conduction cooling via its side rails, to agree with the bulk of existing military installations. It also had to shrink the size from 6U to 3U, and maintain at least 2.5 gigabits per second, with room to grow.

"If you're going to call in all your boats and planes to replace their backplanes, you want the new solution to last at least eight or 10 years," he says. VITA 46 can scale to six or even 10 gigabits per second.

"We see VITA 46 as a platform for convergence," Jaenicke says. "It addresses both 3U and 6U, military and commercial, air and conduction cooled, and VME and PCI. That combination doesn't exist on any other next-generation platform."

Backward compatibility

The scramble to find an optimal fabric faces one serious hurdle: backward compatibility. Most existing military platforms run on a VME backplane, so Pentagon planners would be loath to sacrifice their investments in knowledge and technology by forcing a wholesale change.

That is the reason that switched fabrics with existing card formats have a significant advantage, says Justin Moll, director of marketing at Bustronic, in Fremont, Calif. VXS, the new VITA 41 standard, is a fabric-agnostic architecture that could support nearly any of the new networks. Since it is backward compatible with existing VME boards, it will probably be very popular when it first becomes available on products in March, he says.

Likewise, the VITA 41.1 standard supports InfiniBand, and the VITA 41.2 standard will support Serial RapidIO. Engineers using CompactPCI boards can choose between the PICMG 2.16 standard, supporting Ethernet, and the PICMG 2.17 standard, supporting StarFabric.