Trusted boot: a key strategy for ensuring the trustworthiness of an embedded computing system

Trusted boot, a key strategy for ensuring the trustworthiness of an embedded computing system, protects against cyber attacks starting with the very first software instruction executed at system startup.

Trust in an embedded module or system means ensuring that the system operates exactly as intended. In the context of the boot process, trust means that an embedded module executes only the intended boot code, operating system, and application code. The only way to guarantee trust in this chain is to ensure that all code, starting with the very first instruction the processor executes, is authentic and specifically intended by the system integrator to execute on that processor.

There are various ways to establish initial trust in the boot process, and many of the same techniques are also useful for extending trust to the operating system and application code.

Cryptography in the form of encryption and digital signatures is an essential component for establishing trust and preventing a malicious actor from modifying, adding, or replacing authentic code. While encryption ensures confidentiality to prevent prying eyes from understanding the code, it does not guarantee that the code comes from an authorized source and has not been tampered with in some way.

Protecting software authenticity typically requires public-key cryptography to create a digital signature for the software. A protected private key, kept secret by the signer, generates the signature, and a public key stored on the module verifies it. Obtaining the public key does an attacker no good, because generating a valid signature also requires the private key.
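To make the sign/verify split concrete, here is a minimal Python sketch of a signature check using textbook RSA over a SHA-256 digest. It is a toy under stated assumptions: the small Mersenne-prime key, the unpadded scheme, and the function names `sign` and `verify` are illustrative choices, not any vendor's implementation, and production systems use padded schemes (for example RSA-PSS) with much larger keys. Only `sign` touches the private exponent `D`; the module needs nothing but the public values `N` and `E`.

```python
import hashlib

# Toy textbook-RSA parameters. INSECURE: no padding, tiny key, illustration only.
P = 2**89 - 1                      # Mersenne prime
Q = 2**107 - 1                     # Mersenne prime
N = P * Q                          # public modulus (stored on the module)
E = 65537                          # public exponent (stored on the module)
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (kept only by the signer)


def digest_int(image: bytes) -> int:
    """SHA-256 of the image, reduced into the range of the RSA modulus."""
    return int.from_bytes(hashlib.sha256(image).digest(), "big") % N


def sign(image: bytes) -> int:
    """Runs at the signing facility, which holds the private key D."""
    return pow(digest_int(image), D, N)


def verify(image: bytes, signature: int) -> bool:
    """Runs on the module, which needs only the public key (N, E)."""
    return pow(signature, E, N) == digest_int(image)


boot_image = b"boot code v1.0"
sig = sign(boot_image)
print(verify(boot_image, sig))                # True
print(verify(boot_image + b" patched", sig))  # False
```

Because the module holds only `(N, E)`, an attacker who extracts them still cannot produce a signature that `verify` accepts for modified code.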

Trusted boot by itself does not make a system secure. Some attacks can bypass authentication, particularly if the attacker has physical access to the module, and there are other ways to reach the information an attacker wants. For example, the attacker may simply wait until the module is powered up and then observe the application code while it is running.

These techniques for bypassing authentication do not diminish the usefulness of trusted boot as a component in a defense-in-depth approach in which different security technologies work together to provide a far more secure system than any one technology could deliver on its own.

In the embedded computing world, Intel x86, Power Architecture, and Arm are the dominant processor architectures, and each has technologies that support a trusted-boot process. Trusted boot can also be designed around external hardware cryptographic engines and key storage devices. The remainder of this article surveys available commercial options for providing trusted boot.

Trusted eXecution Technology (TXT) and Boot Guard are two Intel technologies that create a trusted boot process. Both make use of an external device called a trusted platform module (TPM) -- a dedicated security coprocessor that provides cryptographic services.


TXT makes use of authenticated code modules (ACMs) from Intel. Each ACM is matched to a specific chipset and is authenticated with a public signing key that is hard-coded in that chipset. During boot, the BIOS ACM measures (computes a cryptographic hash of) various BIOS components and records the measurements in the TPM. A separate secure initialization (SINIT) ACM does the same for the operating system. Because the measurements are repeated each time the module boots, TXT can determine whether the boot code has changed. A user-defined launch control policy (LCP) determines whether such a change fails the boot process or lets the system continue to boot as a non-trusted system.
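The measurement step can be modeled with the TPM's extend operation, in which a platform configuration register (PCR) is never written directly; each new measurement is hashed together with the register's previous value. The sketch below assumes a single SHA-256 PCR that starts at all zeros and a hypothetical `launch_decision` function standing in for the LCP. It simulates the arithmetic only; it does not talk to a real TPM or reproduce which PCRs TXT actually uses.

```python
import hashlib
from typing import List

PCR_SIZE = 32  # a SHA-256 PCR holds one 32-byte digest


def extend(pcr: bytes, component: bytes) -> bytes:
    """TPM-style extend: new PCR = SHA-256(old PCR || SHA-256(component))."""
    measurement = hashlib.sha256(component).digest()
    return hashlib.sha256(pcr + measurement).digest()


def measure_chain(components: List[bytes]) -> bytes:
    """Measure components in boot order into a freshly reset PCR."""
    pcr = bytes(PCR_SIZE)  # assumption: register starts at all zeros
    for component in components:
        pcr = extend(pcr, component)
    return pcr


def launch_decision(pcr: bytes, expected: bytes, fail_on_mismatch: bool) -> str:
    """Hypothetical stand-in for a launch control policy."""
    if pcr == expected:
        return "trusted"
    return "halt" if fail_on_mismatch else "untrusted"


golden = [b"BIOS block A", b"BIOS block B", b"OS loader"]
expected_pcr = measure_chain(golden)  # recorded when the platform is provisioned

tampered = [b"BIOS block A", b"BIOS block B (modified)", b"OS loader"]
print(launch_decision(measure_chain(golden), expected_pcr, True))     # trusted
print(launch_decision(measure_chain(tampered), expected_pcr, True))   # halt
print(launch_decision(measure_chain(tampered), expected_pcr, False))  # untrusted
```

Because each extend folds in the previous value, the final register depends on every component and on their order, so a single altered BIOS block changes the result.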

Boot Guard is a newer Intel technology that works in a complementary fashion to TXT. It introduces an initial boot block (IBB) that executes prior to the BIOS and ensures that the BIOS is trusted before allowing a boot to occur. The OEM signs the IBB and programs the public signing key into one-time programmable fuses in the processor. On power-up the processor verifies the authenticity of the IBB, and then the IBB verifies the BIOS before allowing it to execute. Intel describes Boot Guard as “hardware based boot integrity protection that prevents unauthorized software and malware takeover of boot blocks critical to a system’s function.”

Many of NXP's Power Architecture and Arm processors support the NXP Trust Architecture, with the two processor families using variations of the same architecture. They implement trusted boot differently from Intel. For these processors, the trusted boot process requires the application, including the boot code, to be signed using an RSA private signature key. The digital signature and public key are appended to the image and written to nonvolatile memory, and a hash of the public key is programmed into one-time programmable fuses in the processor.
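The check described above can be sketched in two steps: confirm the key carried in flash against the fused hash, then use that key to verify the signature. This Python sketch assumes a hypothetical image layout (code, then a public key with a 4-byte exponent, then the signature), signs the code together with the key, and reuses toy textbook RSA with a tiny key. NXP's actual image and header formats differ and are not reproduced here; `build_image`, `authenticate`, and `FUSED_KEY_HASH` are illustrative names.

```python
import hashlib

# Toy textbook-RSA key pair. INSECURE: no padding, tiny key, illustration only.
P, Q, E = 2**89 - 1, 2**107 - 1, 65537  # two Mersenne primes, public exponent
N = P * Q
D = pow(E, -1, (P - 1) * (Q - 1))       # private exponent, held only by the signer
KEY_BYTES = (N.bit_length() + 7) // 8   # width of the modulus and signature fields
PUB_BYTES = KEY_BYTES + 4               # modulus followed by a 4-byte exponent


def encode_public_key(n: int, e: int) -> bytes:
    return n.to_bytes(KEY_BYTES, "big") + e.to_bytes(4, "big")


def digest_int(data: bytes, n: int) -> int:
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def build_image(code: bytes) -> bytes:
    """Signing side: append the public key and a signature over code + key."""
    pub = encode_public_key(N, E)
    sig = pow(digest_int(code + pub, N), D, N)
    return code + pub + sig.to_bytes(KEY_BYTES, "big")


# Provisioning: only the hash of the public key is burned into the fuses.
FUSED_KEY_HASH = hashlib.sha256(encode_public_key(N, E)).digest()


def authenticate(image: bytes, fused_key_hash: bytes) -> bool:
    """Boot-ROM side: trust the appended key only if it matches the fused hash."""
    if len(image) < PUB_BYTES + KEY_BYTES:
        return False
    code = image[: -(PUB_BYTES + KEY_BYTES)]
    pub = image[-(PUB_BYTES + KEY_BYTES):-KEY_BYTES]
    sig = int.from_bytes(image[-KEY_BYTES:], "big")
    # Step 1: the key read from flash must hash to the value in the fuses.
    if hashlib.sha256(pub).digest() != fused_key_hash:
        return False
    # Step 2: verify the signature with the now-authenticated key.
    n = int.from_bytes(pub[:KEY_BYTES], "big")
    e = int.from_bytes(pub[KEY_BYTES:], "big")
    return pow(sig, e, n) == digest_int(code + pub, n)


image = build_image(b"boot loader + application")
print(authenticate(image, FUSED_KEY_HASH))             # True
print(authenticate(b"X" + image[1:], FUSED_KEY_HASH))  # False: code altered
```

Storing only a hash in the fuses keeps the one-time-programmable area small while still preventing an attacker from swapping in a key of their own.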

When the processor boots, it begins executing internal secure boot code (ISBC) stored in nonvolatile memory inside the processor. The ISBC first authenticates the public key against the hash value stored in the fuses, then uses that public key to verify the signature on the externally stored boot and application code. The external code may in turn contain its own validation data, serving the next stage the way the ISBC served it. This allows the external code to be broken into many smaller chunks, each validated by the chunk before it. In this way the Trust Architecture can extend to subsequent software modules to establish a chain of trust.
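A chain of trust of this kind can be modeled by having each chunk carry the expected digest of the next one. The Python sketch below is a simplification under stated assumptions: it uses SHA-256 digests embedded in each chunk in place of per-chunk signatures, the `Stage`, `build_chain`, and `boot` names are hypothetical, and the root digest stands in for the ISBC's signature check on the first chunk.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Stage:
    name: str
    code: bytes
    next_digest: Optional[bytes]  # validation data for the stage that follows

    def contents(self) -> bytes:
        # The next stage's digest is part of this stage's own contents, so
        # changing it would break the check performed by the previous stage.
        return self.name.encode() + self.code + (self.next_digest or b"")


def build_chain(parts: List[Tuple[str, bytes]]) -> Tuple[List[Stage], Optional[bytes]]:
    """Build stages last-to-first so each can embed the digest of its successor."""
    stages: List[Stage] = []
    next_digest: Optional[bytes] = None
    for name, code in reversed(parts):
        stage = Stage(name, code, next_digest)
        next_digest = hashlib.sha256(stage.contents()).digest()
        stages.append(stage)
    stages.reverse()
    return stages, next_digest  # the final digest covers the first stage


def boot(stages: List[Stage], root_digest: Optional[bytes]) -> List[str]:
    """Verify each stage before 'running' it; halt at the first mismatch."""
    expected = root_digest  # in hardware, anchored by the boot ROM's signature check
    executed = []
    for stage in stages:
        if expected is None or hashlib.sha256(stage.contents()).digest() != expected:
            raise RuntimeError(f"verification failed at {stage.name}")
        executed.append(stage.name)
        expected = stage.next_digest
    return executed


stages, root = build_chain([
    ("boot loader", b"stage-1 code"),
    ("kernel", b"stage-2 code"),
    ("application", b"stage-3 code"),
])
print(boot(stages, root))  # ['boot loader', 'kernel', 'application']

stages[2].code = b"implanted code"
try:
    boot(stages, root)
except RuntimeError as err:
    print(err)  # verification failed at application
```

Verification happens before each stage runs, so the tampered application is rejected by the kernel's check before it executes, while the earlier, intact stages are unaffected.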

NXP QorIQ processors also enable encryption of portions of the image to prevent attackers from stealing it from flash memory, and they support alternate images to add resiliency to the secure boot sequence.
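The alternate-image feature can be thought of as a selection loop that falls back only to another authenticated image, never to an unauthenticated one. In this hypothetical Python sketch, `select_boot_image` tries candidates in order, and `digest_allowed`, an allow-list of SHA-256 digests, stands in for the real signature check; image encryption is not modeled.

```python
import hashlib
from typing import Callable, Optional, Sequence


def select_boot_image(
    images: Sequence[bytes], is_authentic: Callable[[bytes], bool]
) -> Optional[int]:
    """Return the index of the first image that authenticates, or None to halt."""
    for index, image in enumerate(images):
        if is_authentic(image):
            return index
    return None  # no authentic image: refuse to boot rather than run untrusted code


# Stand-in verifier (assumption): an allow-list of known-good digests.
TRUSTED = {
    hashlib.sha256(b"firmware v2").digest(),
    hashlib.sha256(b"firmware v1").digest(),
}


def digest_allowed(image: bytes) -> bool:
    return hashlib.sha256(image).digest() in TRUSTED


primary = b"firmware v2 (corrupted)"
alternate = b"firmware v1"
print(select_boot_image([primary, alternate], digest_allowed))  # 1
print(select_boot_image([primary], digest_allowed))             # None
```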

Intel and NXP provide mechanisms to implement trusted boot and ensure the authenticity of the boot process starting from the very first line of code. Still, these important steps do not complete the trusted boot effort.

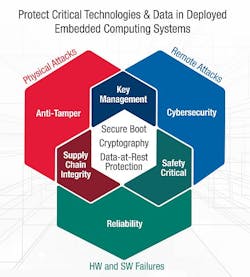

Designers of secure systems must follow these steps with security mechanisms that maintain trust throughout the rest of the boot process. Designers also must identify and mitigate potential attack vectors, especially in embedded systems that may be exposed to supply chain compromise, physical tampering, and remote cyber attack.

Steve Edwards is technical fellow at the Curtiss-Wright Corp. Defense Solutions Division in Ashburn, Va. More information on Curtiss-Wright’s Trusted COTS (TCOTS) program for protecting critical technologies and data in deployed embedded computing systems is online at www.curtisswrightds.com/technologies/trusted-computing.
