Faster and faster I/O

In their quest for increased I/O speeds in high-performance computing systems, military system designers have settled primarily on two standards: Ethernet and RapidIO.

By John McHale

The U.S. military becomes a more network-centric force each year, relying more and more on the quality of the data it receives from sensors on land, at sea, and in the air. Its systems require high bandwidth combined with low latency to move the data quickly from chip to chip, box to box, and system to system.

In embedded computing designs this is accomplished through high-speed fabrics; the two clear choices among system designers are Ethernet—1-gigabit and 10-gigabit variants—and Serial RapidIO.

Gigabit Ethernet and 10 Gigabit Ethernet are the main choices for box-to-box and system-to-system links because of their speed, while Serial RapidIO has solidified a niche in intensive signal-processing applications like radar and sonar.

In business terms it is really a matter of convergence, says Nauman Arshad, senior product marketing manager for switching, security, and MCOTS at Curtiss-Wright Controls Embedded Computing in Leesburg, Va. “Having two or more different fabrics in one system can be expensive,” he adds.

Eventually the community will converge on Ethernet as one solution, except in cluster computing situations such as digital signal processing (DSP) where RapidIO will still be the best solution, Arshad continues. Ethernet can work at that level but not as efficiently as RapidIO, which has lower latency, he notes.

“Despite how cool switched fabric technology is, most customers at the DSP level are not using switched fabrics within signal processing systems,” says Jeff Milrod, president and chief executive officer of BittWare in Concord, N.H. RapidIO makes sense for this level, Milrod says.

“Computational overhead chews up processors,” he explains. “For every megabit of data over Gigabit Ethernet or any Ethernet it requires a megahertz of processing performance.” It can cause a system to really get bogged down when running something such as protocol stacks, Milrod adds.
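Milrod's rule of thumb can be turned into a quick estimate. The sketch below is illustrative only: it assumes the one-megahertz-per-megabit ratio holds linearly, and the function names and the 1.5 GHz processor clock are hypothetical values chosen for the example, not figures from BittWare.

```python
# Rule of thumb quoted above: roughly 1 MHz of CPU per 1 Mbit/s of Ethernet traffic.
MHZ_PER_MBIT = 1.0  # assumption: treated as an exact, linear ratio


def stack_cpu_mhz(traffic_mbit_s: float) -> float:
    """Estimate the CPU clock (in MHz) consumed by protocol processing."""
    return traffic_mbit_s * MHZ_PER_MBIT


def cpu_fraction(traffic_mbit_s: float, cpu_clock_mhz: float) -> float:
    """Fraction of one processor spent on the stack (may exceed 1.0)."""
    return stack_cpu_mhz(traffic_mbit_s) / cpu_clock_mhz


if __name__ == "__main__":
    cpu_clock_mhz = 1500.0  # hypothetical 1.5 GHz processor
    links = (("Gigabit Ethernet", 1_000.0), ("10 Gigabit Ethernet", 10_000.0))
    for label, rate_mbit_s in links:
        share = cpu_fraction(rate_mbit_s, cpu_clock_mhz)
        print(f"{label}: {stack_cpu_mhz(rate_mbit_s):.0f} MHz, {share:.0%} of one CPU")
```

Under these assumptions, a saturated Gigabit Ethernet link would consume about two-thirds of the hypothetical processor, and a saturated 10 Gigabit Ethernet link would need several processors' worth of cycles.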

“I see RapidIO gaining more ground” in terms of economies of scale, says Rob Kraft, vice president of marketing at AdvancedIO Systems in Vancouver, British Columbia. It is still the best technical solution for high-end signal processing, while Ethernet can be used for passing on the high-speed data from the box. This is for network-centric, command and control applications, he explains.

There is a lot of market attention in the military embedded community for switched fabrics, especially RapidIO, says Michael Stern, AXIS multiprocessing at GE Fanuc Intelligent Platforms in Towcester, England. GE Fanuc’s CRX800 is a VXS-compliant 22-port Serial RapidIO switch card, designed to provide support for the company’s DSP220 VXS quad 8641 processor. It has four Serial RapidIO payload ports, four Serial RapidIO inter-switch ports, and a PowerPC MPC8548 management processor. Its Tundra Serial RapidIO implementation enables signaling rates as fast as 3.125 gigabaud per lane.

PCI Express, meanwhile, is still appropriate for point-to-point processing, and on the front end the front panel data port (FPDP) standard continues to be adapted, Kraft continues.

Military designs with Gigabit Ethernet are being deployed, and 10 Gigabit Ethernet products are right around the corner and promise even more growth, Kraft says. He notes that more ruggedization requirements are also coming in from his military customers, who are looking for more value than typical commercial products provide. Arshad also sees 10 Gigabit Ethernet playing a major role in networking-on-the-move applications and in the adoption of Internet Protocol version 6 (IPv6). These applications will require “huge uploads of video” data over IP, and 10 Gigabit Ethernet has the bandwidth to transmit that data, he adds. Curtiss-Wright will also provide network processor boards and routers for these applications, Arshad notes.

Eventually it will be a matter of packaging, Arshad says. DSPs and field-programmable gate arrays (FPGAs) will sit together in one chassis, connected by a spread of serial links, he says. Data processing will use RapidIO, and the data will leave the box's switch cluster on 10 Gigabit Ethernet.

Curtiss-Wright has a 6U VPX Gigabit Ethernet multilayer switch/router board, the VPX6-684 FireBlade II, that can use 12, 20, or 24 Gigabit Ethernet ports and as many as four 10 Gigabit Ethernet ports. The company also announced that its Gigabit Ethernet Switch Module (GESM) will be used in the development and demonstration phase of the U.S. Army’s Future Combat Systems (FCS) Integrated Computer System (ICS) program.

Dave Barker, vice president of marketing at VMETRO in Houston, says that 10 Gigabit Ethernet is getting a lot of attention in large storage applications. The data comes in at high speeds and “we record it and process it,” he explains. The company has partnered with AdvancedIO on the storage side for its 10 Gigabit Ethernet products. He adds that he has not seen much demand for Serial RapidIO in these types of applications.

Blending Ethernet and RapidIO

Engineers at Mercury Computer Systems Inc. in Chelmsford, Mass., and AdvancedIO have combined Ethernet and RapidIO in one system. “We are creating a bridge between the two worlds,” with RapidIO inside the box and 10 Gigabit Ethernet outside the box, says Kraft. This gives VXS users a commercial off-the-shelf (COTS) system that combines the high speeds of 10 Gigabit Ethernet with the capabilities RapidIO brings to board-to-board situations, says Tom Roberts, product marketing manager at Mercury.

Basically, Mercury is transferring data via 10 Gigabit Ethernet from outside the box to the Serial RapidIO fabric, Roberts says. Sensors pump data into the RapidIO fabric through a 10 Gigabit Ethernet connection; inside the box the data travels only over RapidIO among several compute nodes, and once the nodes finish their computations, the results go back out of the system on the same “10-gig highway,” he explains.

“By deploying the Gateway in VXS, developers can implement one, platform-wide Ethernet network that streams sensor data to C4I (command, control, communications, computers, and intelligence) applications,” says Ian Dunn, chief technologist for advanced computing solutions at Mercury.

The SR-110 is based on the Freescale MPC8548 processor and includes a Power Architecture core, Serial RapidIO, Gigabit Ethernet controllers, and an integrated DDR2 memory interface. The device supports gateway and bridging modes with MultiCore Plus software.

“People use RapidIO if they want determinism” at the processor level, but there is too much software overhead with 10 Gigabit Ethernet at that level, Roberts says. “Microseconds matter in signal processing.” For example, synthetic aperture radar applications have better latency using RapidIO between processor nodes, but can get the data out on 10 Gigabit Ethernet, he says.

Moving sensor data

“That is what we’re seeing—more and more desire to share data between sensors,” says Roberts.

Roberts points to a white paper written by his colleague, Dunn, to illustrate his point. The paper, titled “Converged Sensor Networking: Bringing IP Networking to the Tactical Edge,” states that “defense application developers” need to develop a digital signal processing architecture to adapt IPv6 for sensor applications in surveillance and targeting platforms.

Dunn calls it converged sensor networking architecture (CSNA). He states the key to making this happen is in cluster computing—“computers integrated in a network through hardware and software to behave as one computer.” In the paper, he states this can be accomplished in VXS-based systems through various implementations of switch fabrics, such as RapidIO and Ethernet. Dunn prefers VXS (VITA standard 42) because it “adds a high-speed serial fabric capability, while maintaining VME64 compatibility.

“A VXS chassis can house existing 6U signal processing assets, while hosting advanced packet-based payloads. Redundant systems can be realized with separate data and control planes,” Dunn states. “10 Gigabit Ethernet remains external to the CSNE, while ultra-efficient RapidIO is applied within the system. In redundant RapidIO-based CSNE, dual 10 Gigabit Ethernet interconnects the CSNE system to IP nodes via a Layer-2/3 backbone, or directly to 10 Gigabit Ethernet-enabled sensor feeds. The 10 Gigabit Ethernet-to-RapidIO gateways translate between the two protocols and the entire Power Architecture processing heart of the CSNE subsystem. The failure of a gateway or switch does not disable the system, because failover can occur to the redundant modules.”

Dunn concluded by predicting that the plethora of sensors deployed by future and current defense systems will need CSNE to link “multiple sensors and users together effectively.” The network will also require “networking and exploitation capabilities to collaborate among sensors on the collection of target data that directly addresses the warfighter’s request,” he writes.

Roberts says that one major defense prime contractor has already put the company’s CSNE products to use, but declined to comment further due to contractual obligations.

Engineers at Critical IO in Irvine, Calif., have also introduced products to improve bandwidth between sensors, says Jack Staub, president and chief executive officer of Critical IO. For applications such as lidar and radar, Gigabit Ethernet and 10 Gigabit Ethernet create an Ethernet network that links IO devices with processors, storage devices, etc. He calls it the Gigabit Ethernet Sensor Network.

“We believe Ethernet is a great way to connect sensors and processors,” says Erich Fischer, director of business development at Critical IO. For one, it is in use everywhere and can be easily migrated from 1-gigabit speeds to 10-gigabit speeds. This enables management of sensor data on one network, Fischer notes.

The Critical IO product is called the Sensor Link module, and it links all the data from IO devices such as analog-to-digital converters, Fischer says. The bidirectional Sensor Link converts sensor data streams to and from standard UDP or TCP Ethernet data at sustained rates as fast as 1,200 megabytes per second.
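For context, the quoted 1,200 megabytes per second is close to the most a single 10 Gigabit Ethernet link can carry. The sketch below estimates the best-case UDP payload rate from standard Ethernet, IPv4, and UDP framing overhead. The function name and the two MTU values are illustrative choices, and nothing here describes how the Sensor Link itself is configured.

```python
# Per-frame overhead on the wire, in bytes (standard Ethernet, no VLAN tag).
ETH_WIRE_OVERHEAD = 8 + 14 + 4 + 12  # preamble/SFD + header + FCS + inter-frame gap
IPV4_HEADER = 20                     # IPv4 header without options
UDP_HEADER = 8


def udp_goodput_mbyte_s(line_rate_bit_s: float, mtu: int) -> float:
    """Best-case UDP payload throughput in megabytes (10**6 bytes) per second."""
    payload = mtu - IPV4_HEADER - UDP_HEADER  # application bytes per frame
    on_wire = mtu + ETH_WIRE_OVERHEAD         # bytes of link time per frame
    return line_rate_bit_s / 8 / 1e6 * payload / on_wire


if __name__ == "__main__":
    for mtu in (1500, 9000):  # standard and jumbo frames
        rate = udp_goodput_mbyte_s(10e9, mtu)
        print(f"MTU {mtu}: {rate:.1f} MB/s of UDP payload")
```

With these assumptions, standard 1,500-byte frames top out a little under 1,200 MB/s and 9,000-byte jumbo frames at roughly 1,240 MB/s, so a sustained 1,200 MB/s leaves very little headroom on the link.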

Not every application needs the monster bandwidth of 10 Gigabit Ethernet, which is where Gigabit Ethernet comes in, Staub says. It is already being fielded in military systems, while 10 Gigabit Ethernet is still a couple of years away from mass deployment. “Ethernet will have a long life and is the obvious winner in the long-term,” Staub says.

Critical IO also still develops its XGE Silicon Stack TCP/IP Offload Engine (TOE) PCI mezzanine cards (PMCs). The devices use a silicon stack that implements TCP/IP offload in silicon, providing low host-CPU loading and deterministic operation. Multiprocessing on Ethernet allows reconfigurability on the fly and data sharing in real time, which are essential in future tactical sensor applications, Fischer says.

FPGAs and IO

10 Gigabit Ethernet has not yet reached critical mass, and there is no guarantee that it will be around 10 years from now, Milrod says. Nor is Serial RapidIO guaranteed to last, he adds. This is where FPGAs come in: they offer reuse possibilities through their IP cores, he says.

Using FPGAs from Altera in San Jose, Calif., BittWare engineers are building an internal methodology for this purpose to support their customers, Milrod says. He elaborates that “to include FPGAs as part of the signal processing chain, board architectures must also include an FPGA framework.” He and his company call their methodology the Atlantis framework.

Atlantis provides “validated board-level interfaces for I/O, communications, and memory; an internal dataflow interconnect fabric that allows the Atlantis modules to be easily connected; and a control fabric that allows them to be easily coordinated and controlled,” he continues. Milrod says the result is a signal processing platform that is stable, letting the designer “focus on application development rather than reinventing board-level infrastructure.

“I don’t know that we’re doing anything new,” Milrod continues. Other companies have FPGA libraries, but what BittWare is doing is creating a standard framework that is open to its customers. “It’s not re-inventing the wheel, it’s re-using IP” and is relevant to IO issues at a level “we can control,” Milrod says. “It is a business model to use FPGAs” to bring value directly to BittWare’s customers.

Milrod expects it to be ready by the end of this year. BittWare’s GT-6U-VME VXS board uses FPGA and DSP technology along with the Atlantis framework for applications requiring flexibility and adaptability along with high-end signal processing, Milrod says.

The board features two high-density Altera Stratix II GX FPGAs, two clusters of two ADSP-TS201S TigerSHARC processors from Analog Devices, a front-panel interface supplying four channels of high-speed SerDes transceivers, and an extensive back-panel interface including VXS. Simultaneous on- and off-board data transfers can be achieved at a rate of 5 gigabytes per second via two BittWare Atlantis frameworks implemented in the Altera Stratix II GX FPGAs.

While BittWare uses Altera FPGAs, many in the military embedded community use FPGAs from Xilinx, also located in San Jose, Calif. Xilinx FPGAs have serial links called RocketIO, which really enhance IO speeds in a system, says Mark Littlefield, RapidIO product marketing manager for FPGA and signal acquisition and synthesis products. RocketIO is an internal Xilinx term, he adds.

Xilinx has products based on three different switched fabrics: Aurora, PCI Express, and Serial RapidIO. Memory integration levels are also much higher with FPGAs, Littlefield adds. “Our customers like FPGAs because of their flexibility and scalability,” GE Fanuc’s Stern says.

For signal processing and sensor applications, FPGAs with Serial RapidIO cores are an excellent choice, VMETRO’s Barker says. The FPGA acts as a bridge between the FPGA and PC world.

For high-bandwidth, low-latency applications, VMETRO’s FPE650 quad FPGA processor uses the Xilinx Virtex-5 and takes advantage of the card’s network of RocketIO links and parallel connections to foster high-speed IO, Barker says. The serial and parallel data links to the backplane, as well as the FMC (VITA 57) mezzanine sites for direct I/O to the FPGAs, move data without introducing bottlenecks.

There are dedicated high-speed links that connect to the front-panel FMC sites and VPX backplane connections. The FPE650 is designed for DSP applications such as electronic countermeasures, signal intelligence, and electro-optics.

Board standards, such as VXS and VPX, and fabrics, such as Ethernet and RapidIO, are what customers are looking for, Barker says. Yes, someone can buy a baseline COTS IO board and come up with his own form factor, but with standards it is much easier, he continues.

This is why reuse within FPGAs is such an advantage for long-term business strategies, Barker says. FPGAs and their IP cores based on RapidIO and other fabrics will help fight obsolescence in analog and digital IO applications, he explains. Going forward, VPX standard products will really lead the charge for switched fabrics, Barker says. They will also need to support several fabrics as the market evolves.

VITA standards

The VITA standards organization in Fountain Hills, Ariz., continues to develop standards for integrating the different switched fabrics into VXS and VPX (VITA 46) computing products.

“AdvancedIO proposed and is leading the definition of the VITA 42.6 standard for running 10 Gigabit Ethernet over the XMC connectors,” AdvancedIO’s Kraft says. “This effort will lead to a common standard to satisfy the demand for 10 Gigabit Ethernet offload and connectivity in ruggedized applications where front-panel access is not possible.”

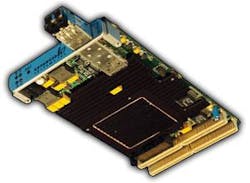

AdvancedIO’s V1120 product is designed to meet VITA 42.6, Kraft adds. The V1120 is a 10 Gigabit Ethernet module that is also conduction-cooled for extended temperature. It also provides host connectivity through PCI Express and has as many as two channels of 10 Gigabit Ethernet connectivity over the standard XMC Pn6 connector. It is targeted at radar and sonar applications.

For VPX, VITA 46.7 will address 10 Gigabit Ethernet issues on the board-to-board level, Curtiss-Wright’s Arshad comments.

BittWare is also committed to a long-term VPX roadmap, Milrod says. “The roadmap mirrors our line of hybrid (FPGA and DSP) signal processing products, which combine Analog Devices’ TS201 with Altera’s high-density FPGAs. Also included in the roadmap are 3U and 6U VPX products based on the Altera Stratix IV.”

Milrod says he thought VXS offered sufficient bandwidth for almost any application and that VPX might not necessarily be needed, but VPX is where the market is headed.
