Power control and thermal management at the platform level

Demands are increasing for high-performance embedded computing in small packages, which creates a huge amount of waste heat. Designers look at sharing responsibilities for electronics cooling from chips to entire systems.

Aerospace and defense systems designers today face an overwhelming task when it comes to electric power control and thermal management. Systems integrators are demanding increased capabilities and ever-smaller packaging, and these converging forces are conspiring to create staggering amounts of waste heat; there's no end in sight to this trend.

On a subsystem level, controlling power and managing waste heat is relatively straightforward. Embedded computing and electronics subsystem designers today look at making components and board products as small and as power-efficient as possible, and remove waste heat with conduction, convection, or liquid cooling.

Conduction cooling moves heat away from hot components like data and graphics processors by channeling heat along card edges or through heat pipes to cooler surrounding surfaces. Convection cooling uses fans to blow heat away from hot components. Liquid cooling, meanwhile, uses a system of pumps and plumbing to move heat away from the electronic components that produce it.

These approaches often work just fine within an electronics box, but the really sticky problems come at the so-called platform level - on an aircraft, ground vehicle, surface ship, or submarine. The core question: where does the heat go when it moves away from chips, boards, and electronics boxes?

Waste heat from electronics boxes has to go somewhere, and places to dump heat on the platform are decreasing in number as technology advances. Moreover, the number of electronics subsystems producing waste heat on modern military platforms is growing, which compounds the problem - a growing amount of heat, and fewer places to dump it.

"Just to take the heat away from electronic components is just the first step," says Gerald Janicki, vice president of thermal systems at Meggitt Defense Systems Inc. in Irvine, Calif. The box-level electronics designers "are smart enough to get the heat from the board to something that will conduct the heat, and then their job is done. You really have to have a global and local optimization approach. You can't just cool at the component, board, or box level. Nothing has gotten cooler; everything has gotten hotter."

Where to get rid of the heat

Consider an aircraft. One possibility for dumping heat is into the ambient air. At altitudes where temperatures are cold, that can be the best solution, but what about when the aircraft is idling on a desert runway with its electronics on? In those cases, using just ambient air as a primary heat sink isn't adequate.

One place where systems engineers can consider dumping waste heat is liquid, and on aircraft that means fuel or hydraulic fluid. While aircraft fuel systems still provide abundant opportunity to move heat away from electronics, using hydraulic fluid to absorb heat just isn't what it used to be. Modern avionics designs are making many hydraulic systems a thing of the past. In fact, the option of using aircraft hydraulic fluid for heat management is shrinking or going away entirely.

Modern aircraft are being designed as all-electric, or at least "more-electric." That means using electric power to move control surfaces, brakes, and other mechanical systems rather than hydraulics. While this might be an advantage for improving overall platform maintenance, it can be a nightmare for the thermal engineer. Not only do electric aircraft have fewer places to dump waste heat, but the subsystems that are converting from hydraulics to electric power now are generating electric waste heat that wasn't there before.

"Aircraft today are very electric, and rely less and less on engine power and more and more on secondarily generated power," points out Meggitt's Janicki. "Active cooling needs to dump the heat into the fuel. That's fine until you start running low on fuel. Good thermal management is very benign; nobody cares about it until something breaks down. It can ruin your whole mission."

An aircraft fuel system also is a viable option for dumping waste heat, and this practice is common. Still, what happens when the aircraft starts to run low on fuel? Not only is the aircraft's range severely cut back, but its ability to cool electronics also is diminished. Could a tactical aircraft running low on fuel also have to compromise the capability or range of its sensors to avoid overheating its electronics?
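
A rough sketch of why a shrinking fuel load weakens the heat sink: for a fixed heat load dumped into the fuel, the rate of fuel temperature rise scales inversely with the fuel mass remaining. The specific heat (roughly 2.0 kJ/kg·K for kerosene-type jet fuel), the 50-kilowatt heat load, and the fuel masses below are illustrative assumptions, not figures from any aircraft program, and the model ignores heat that leaves with fuel burned in the engine.

```python
# Illustrative only: lumped-mass model of fuel used as a heat sink.
# Assumed approximate specific heat for kerosene-type jet fuel.
FUEL_SPECIFIC_HEAT_J_PER_KG_K = 2000.0


def fuel_temp_rise_rate(heat_load_w: float, fuel_mass_kg: float) -> float:
    """Return the fuel temperature rise in kelvin per minute."""
    if fuel_mass_kg <= 0:
        raise ValueError("fuel mass must be positive")
    kelvin_per_second = heat_load_w / (fuel_mass_kg * FUEL_SPECIFIC_HEAT_J_PER_KG_K)
    return kelvin_per_second * 60.0


if __name__ == "__main__":
    heat_load_w = 50_000.0  # hypothetical electronics heat load
    for fuel_mass_kg in (8000.0, 4000.0, 1000.0):  # hypothetical fuel states
        rate = fuel_temp_rise_rate(heat_load_w, fuel_mass_kg)
        print(f"{fuel_mass_kg:6.0f} kg of fuel: {rate:.2f} K/min")
```

Under these assumptions the same heat load warms 1,000 kilograms of fuel eight times faster than 8,000 kilograms, which is the effect the sources describe when an aircraft runs low on fuel.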

Platform-level considerations

"It's a very serious system problem," says Michael Humphrey, key account manager for business development and global support at the Parker Hannifin Corp. Aerospace Group in Alexandria, Va. "All systems like this need to look hard at the capacity of the system, and what if you are putting a thermal on your fuel system that might impact your range or effectiveness."

Some industry sources suggest that the Lockheed Martin F-35 Joint Strike Fighter is facing just this issue as platform designers scramble to balance the ability to manage waste heat with the aircraft's onboard, high-performance computing, sensors, high-speed data networking, and other electronic subsystems.

"The F-22 and F-35 are moving to more-electric aircraft, generating a lot of waste heat. What are you going to do with that?" asks Jim McCormick, product line director for solid-state power controllers at Data Device Corp. (DDC) in Bohemia, N.Y.

It can be awkward to discuss electronics thermal management on a platform level. Electronic subsystems designers continue to develop innovative box-level cooling techniques to handle modern processors like the Intel Xeon D and a variety of general-purpose graphics processing units (GPGPUs). As long as they can cool a box, their job is done.

Platform-level systems integrators, meanwhile, have so many design priorities to worry about that system-wide thermal management rarely comes up. "We have been talking about this system-level thermal management for a long time, and it's falling on deaf ears," laments Parker's Humphrey.

Experts are urging subsystems designers to think more broadly about thermal-management solutions that can benefit the entire platform, rather than just the electronics box. "We shouldn't waste our time on technologies that don't really affect the overall system," says Meggitt's Janicki. "You have to understand how these smaller technologies like passive cooling affect the whole system. There are a lot of good solutions out there, but scaling them up is another matter."

Electronic subsystems designers and platform integrators shouldn't just think about how to cool boards and boxes; they should consider early in the design process the environment in which a platform will operate and the opportunities that environment offers for cooling. "It's really not even just about what the platform is; it's about where the platform is, and what is the place you have to get rid of the heat into," says Parker's Humphrey.

"We've never really coupled power and cooling together, and think we have to," adds Meggitt's Janicki.

Thermal management and power efficiency

One of the most straightforward approaches to managing waste heat in an electronics system or on a platform is to do everything possible to improve the efficiency of electronic components and subsystems so they function with high performance, yet generate a minimum of waste heat.

"We are now working on power density - or how little real estate you can allow, vs. how much space do you have allocated in the platform for power switching," says DDC's McCormick. "We do some custom ASIC [application-specific integrated circuit] designs to get some advantages, reduce board space, add more heat sinking, and have a more compact design."

Another approach to enhancing power efficiency involves so-called "smart power," or using microprocessors instead of electro-mechanical circuit breakers to route and control electric power. "We have seen a huge conversion from electro-mechanical circuit breakers to solid-state power controllers [SSPCs]," McCormick says.

Inserting digital control of power also offers new ways to look at power management on a system and platform level. "People have been looking at intelligent load management," McCormick says. "If I only have so much power available, how do I manage this load from a power-management and power-dissipation perspective? We could think [about] segregating non-essential things like commercial aircraft galleys, and then power-up the galleys when there's less load on the entire system."
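
The load-management idea can be sketched as a simple priority scheme: power the most essential loads first and defer non-essential ones, such as a galley, until the budget allows. This is a minimal illustration, not DDC's method; the `Load` class, the priority convention, the load names, and all wattages are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Load:
    name: str
    power_w: float
    priority: int  # hypothetical convention: lower number = more essential


def select_loads(loads: Sequence[Load], available_w: float) -> List[str]:
    """Greedily power loads in priority order within the available budget.

    A load that does not fit is skipped, so a smaller, lower-priority
    load may still be powered after it.
    """
    powered = []
    remaining = available_w
    for load in sorted(loads, key=lambda item: item.priority):
        if load.power_w <= remaining:
            powered.append(load.name)
            remaining -= load.power_w
    return powered


# Hypothetical loads and power draws, for illustration only.
LOADS = [
    Load("avionics", 3000.0, 0),
    Load("cabin_environment", 6000.0, 1),
    Load("galley", 4000.0, 2),
]

if __name__ == "__main__":
    for budget_w in (10_000.0, 14_000.0):
        print(budget_w, select_loads(LOADS, budget_w))
```

With a 10-kilowatt budget the galley stays off; once 14 kilowatts are available, it is powered along with everything else. A real controller would also have to weigh dissipation limits and switching transients, which this sketch leaves out.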

Parker Hannifin offers an onboard controller that manages thermal control in a chassis. In this case thermal management involves not only removing waste heat from electronics, but also providing heat for powering-up electronics in cold environments.

"Using COTS [commercial off-the-shelf] or modified COTS, a lot of those circuit cards in embedded systems can go down to zero or minus 40 degrees Celsius," says Jason Dundas, engineering manager in the Parker Hannifin Gas Turbine Fuel Systems Division in Mentor, Ohio. "We can manage all that: the power quality with system-level monitoring, intermediate voltage rails, and backplane voltages. Monitoring gives us the ability to send messages to a customer's payload that make decisions based on that information."
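
A chassis controller of this kind can be pictured as two small checks: one that decides whether the cards need preheating before power-up, and one that flags voltage rails outside tolerance so a message can go to the payload. The sketch below is an assumption-laden illustration, not Parker's implementation; the function names, the 85-degree upper limit, the 5 percent tolerance, and the rail names are all hypothetical. Only the minus-40-degree figure comes from the quote above.

```python
from typing import Dict, List


def chassis_mode(card_temp_c: float,
                 min_start_c: float = -40.0,
                 max_run_c: float = 85.0) -> str:
    """Pick a coarse thermal mode from the card temperature.

    min_start_c follows the minus-40 figure quoted in the article;
    max_run_c is a hypothetical upper limit.
    """
    if card_temp_c < min_start_c:
        return "preheat"   # warm the cards before powering them up
    if card_temp_c > max_run_c:
        return "overtemp"  # more heat removal or load reduction is needed
    return "run"


def rails_out_of_tolerance(measured_v: Dict[str, float],
                           nominal_v: Dict[str, float],
                           tolerance: float = 0.05) -> List[str]:
    """Return the sorted names of rails deviating from nominal by more
    than the given fraction (hypothetical 5 percent by default)."""
    return sorted(
        name for name, nominal in nominal_v.items()
        if abs(measured_v[name] - nominal) > tolerance * nominal
    )


if __name__ == "__main__":
    print(chassis_mode(-55.0))
    print(rails_out_of_tolerance({"12V": 11.2, "3V3": 3.31},
                                 {"12V": 12.0, "3V3": 3.3}))
```

The returned mode and rail list stand in for the status messages a payload could use to make its own decisions.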

Parker thermal-management experts also are working on concepts involving two-phase cooling to enhance heat removal in small packages. Two-phase cooling brings a liquid to its boiling point, so the coolant absorbs heat not only by warming the liquid itself, but also through the latent heat of vaporization as the liquid boils.

"Think about boiling water on your stove top," Dundas says. "Heated water stays at that same temperature, even though you continue to apply heat energy. You can acquire heat while keeping your substrate at a relatively isothermal temperature, which is very good and promotes efficient heat transfer."

The trick is to transport the cooling liquid and steam to a condenser. "Until that heat condenses, you still use that vaporization to reject that same amount of heat," Dundas says. This process can enable users to maintain temperatures while transferring vast amounts of heat. "You end up with something that has a much smaller volume of liquid for coolant," says Parker's Humphrey. "Then you can think about creative ways to save weight."
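
To see why two-phase cooling needs so much less coolant, compare the mass flow required to carry away the same heat load with and without boiling. The sketch uses water, in keeping with the stove-top analogy, with textbook property values at atmospheric pressure; the 1-kilowatt load and 10-kelvin temperature rise are hypothetical, and the two-phase case assumes all of the coolant vaporizes. Real systems often use other fluids with different properties.

```python
# Approximate properties of water at atmospheric pressure.
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0
WATER_LATENT_HEAT_J_PER_KG = 2.257e6


def single_phase_flow_kg_s(heat_w: float, temp_rise_k: float) -> float:
    """Mass flow needed when the liquid only warms up (sensible heat)."""
    return heat_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * temp_rise_k)


def two_phase_flow_kg_s(heat_w: float) -> float:
    """Mass flow needed when the liquid fully boils off (latent heat)."""
    return heat_w / WATER_LATENT_HEAT_J_PER_KG


if __name__ == "__main__":
    heat_w = 1000.0      # hypothetical heat load
    temp_rise_k = 10.0   # hypothetical allowable coolant temperature rise
    single = single_phase_flow_kg_s(heat_w, temp_rise_k)
    boiling = two_phase_flow_kg_s(heat_w)
    print(f"single-phase: {single * 1000:.2f} g/s")
    print(f"two-phase:    {boiling * 1000:.3f} g/s")
    print(f"ratio:        {single / boiling:.0f}x")
```

Under these assumptions the boiling coolant carries the same heat with roughly one fiftieth of the mass flow, which is consistent with Humphrey's point about a much smaller volume of liquid.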

Cooling hot custom boards

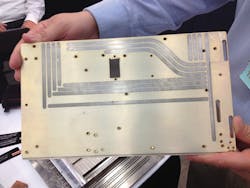

Parker experts are capitalizing on their company's expertise in liquid cooling to create a thin metal cold plate containing a small amount of liquid to cool custom-designed high-performance circuit boards for radar, electronic warfare (EW), signals intelligence (SIGINT), and other demanding applications.

"We want to get the heat-acquiring substance, liquid, as close as possible to the heat source so we can pick up the heat," Dundas says. "It allows such nice integration of your cooling with your electronics." Some of these specialized boards, custom-designed by the prime contractors, can generate as much as 200 watts of waste heat per board. "Sensors are driving thermal densities up, and heat pipes can't transfer the heat fast enough."

If power-control and thermal-management trends continue as they have been, a growing number of embedded computing designers will be forced into using liquid cooling, simply because conduction and convection cooling will be inadequate to handle such intense thermal loads, Parker's Humphrey says.

"At the board level, it is still a COTS market, but the box level and sensor level are very application-specific," Humphrey says. Applications like that most likely will require custom and application-specific cooling solutions for now, but COTS high-performance embedded computing designers are likely to become involved in liquid cooling as well. "It will evolve down to the COTS box guys," he says. "These guys will get pulled into the liquid-cooled world kicking and fighting."

Revolutionary designs in power efficiency for high-performance embedded computing

There are two ways to control potentially damaging heat from high-performance embedded computing systems: move the heat away with conduction, convection, or liquid cooling, or create vast enhancements in the power efficiency of digital processors to reduce the amount of heat they generate.

It's the latter option, enhancing power efficiency, that drives a U.S. military research program that seeks to create enabling technologies leading to the design of high-performance processors with power efficiencies of 75 billion floating-point operations per second per watt of electricity.

Seventy-five gigaflops per watt is the lofty goal of the Power Efficiency Revolution for Embedded Computing Technologies (PERFECT) program of the U.S. Defense Advanced Research Projects Agency (DARPA) in Arlington, Va.
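
A quick calculation shows what that target would mean for a power budget. The sketch simply divides a processing throughput by an efficiency figure; the 1-teraflop workload and the 1-gigaflop-per-watt comparison point are hypothetical values chosen for illustration, not numbers from the PERFECT program.

```python
def power_required_w(throughput_gflops: float,
                     efficiency_gflops_per_w: float) -> float:
    """Electrical power needed to sustain a throughput at a given efficiency."""
    if efficiency_gflops_per_w <= 0:
        raise ValueError("efficiency must be positive")
    return throughput_gflops / efficiency_gflops_per_w


if __name__ == "__main__":
    workload_gflops = 1000.0  # hypothetical 1-teraflop workload
    for efficiency in (1.0, 75.0):  # hypothetical baseline vs. the 75 GFLOPS/W goal
        watts = power_required_w(workload_gflops, efficiency)
        print(f"{efficiency:5.1f} GFLOPS/W -> {watts:7.1f} W")
```

At 75 gigaflops per watt, the hypothetical 1-teraflop workload would draw about 13 watts, and nearly all of that power ends up as heat the platform must reject.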

Beginning in 2012, the PERFECT program set out on an approach that includes near-threshold voltage operation and massive heterogeneous processing concurrency, combined with ways to use the resulting concurrency and tolerate the resulting increased rate of soft errors - all seeking to improve the power efficiency of embedded computing components.

"We could trade the thermals, but that's really solving the problem, because if you can reduce the overall power of the processing, you can make thermal management better," says Trung Tran, who manages the PERFECT program for the DARPA Microsystems Technology Office (MTO). "Thermals are something you have to look at, but PERFECT is looking reducing overall power, and then thermal management on the platform is less of a problem."

The PERFECT program was originally focused on seven program elements: architecture, concurrency, resilience, locality, algorithms, simulation, and test and verification.

As the program stands today, several contractors have been involved, and its benefits revolve around three technology areas: power design, reducing the voltage of electronic components, and advanced algorithms that use low power, Tran explains.

One of the most promising successes of the PERFECT program was at the University of California at Berkeley: the Strober tool, which enables processor manufacturers to gauge the power usage of their components in real time during the design phase. Strober "gives you within 3 percent of the power of the chip for workload, and that gives you a lot of control over the design," Tran says. "Now we can start doing power tradeoffs in real time, and make power an integral part of the design."

Separately, chip experts at IBM Corp. created a suite of design tools called CLEAR that help engineers ensure the reliability of processor designs that increase power efficiencies by using relatively low voltages.

"With CLEAR we can design-in reliability in the circuit so we could run at lower voltages and correct the errors of running at those lower voltages," Tran says. "Instead of going from a 1-volt power supply we can go all the way to 0.6 or 0.4 volts in the operation of the chip. We're looking at 60 percent of the voltage being gone and still have the same reliability and functionality. We get five orders of magnitude increase in the reliability of the chip."
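
The article does not give a power model, but the appeal of lower supply voltage can be sketched with the standard first-order relation for CMOS dynamic power, which scales with the square of the voltage at a fixed clock frequency and switched capacitance. This is a textbook approximation, not a PERFECT result; it ignores leakage, and in practice clock frequency usually has to drop at near-threshold voltages.

```python
def dynamic_power_ratio(v_new: float,
                        v_old: float = 1.0,
                        frequency_ratio: float = 1.0) -> float:
    """Ratio of dynamic power after voltage scaling, using P ~ C * V^2 * f.

    frequency_ratio is new clock frequency divided by old; the default of
    1.0 assumes the clock is unchanged, which is an idealization.
    """
    if v_old <= 0 or v_new <= 0:
        raise ValueError("voltages must be positive")
    return (v_new / v_old) ** 2 * frequency_ratio


if __name__ == "__main__":
    for v_new in (0.6, 0.4):  # supply voltages mentioned in the quote
        ratio = dynamic_power_ratio(v_new)
        print(f"1.0 V -> {v_new} V: dynamic power x {ratio:.2f}")
```

Under this approximation, dropping from 1 volt to 0.6 volts cuts dynamic power to about 36 percent of its original value, and 0.4 volts cuts it to about 16 percent.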

Finally, the PERFECT program has led to efficiency increases in software algorithms that advanced processors run. "If we are more intelligent about how we structure the program, we can lower the power," Tran says. "We look at how we map information into memory to reduce the number of idle cycles."

With substantial research work already completed, Tran is working to transfer lessons learned to industry. Anyone interested in learning more or gaining access to the PERFECT research should e-mail [email protected].