Combat robots

Unmanned vehicle technology developers focus on artificial intelligence, machine automation, and collaborative algorithms to make tomorrow’s robots smarter and more lethal than ever.

By J.R. Wilson

As recently as 30 years ago, the only combat robots were in science fiction movies and TV shows. That began to change in 1991 with the introduction of the U.S. military’s first — and, by today’s standards, primitive — unmanned aerial vehicle (UAV).

The DARPA Fast Lightweight Autonomy (FLA) competition seeks to provide increased safety and situational awareness to battlefield airmen.

The RQ-2A Pioneer’s success as an unarmed reconnaissance UAV marked the beginning of a new era in warfare that would make unmanned aircraft of all sizes and mission capabilities ubiquitous and essential in the second Gulf War a decade later. Operation Iraqi Freedom and Operation Enduring Freedom-Afghanistan also saw the introduction of the first ground robots, which U.S. warfighters used to scout caves and check under vehicles for explosives.

Virtually every nation on Earth now operates some form of UAV for military or law enforcement — with many also seeking to develop an indigenous production capability.

Gill Pratt, CEO and executive technical advisor for the Toyota Research Institute Inc. in Los Altos, Calif., and a former program manager at the U.S. Defense Advanced Research Projects Agency (DARPA) in Arlington, Va., headed that agency’s 2015 Robotics Challenge. He compares the current state of robotic development — including unmanned vehicles (UVs) — to the “Cambrian Explosion,” a period roughly 500 million years ago when the diversity and complexity of life on Earth increased at the most intense pace in the planet’s history.

Since the Pioneer UAV, the U.S. has progressed to fielding a wide range of unmanned aircraft, from small hand-launched, over-the-hill reconnaissance platforms to the massive U.S. Air Force RQ-4 Global Hawk and U.S. Navy MQ-4C Triton.

Unmanned aircraft deployment

The U.S. Army, with input from the Marine Corps, is working with DARPA and other U.S. Department of Defense (DOD), academic, and industrial labs to create viable unmanned ground vehicles (UGVs) — wheeled, tracked, and legged. The U.S. Navy, in addition to its unmanned combat air vehicle (UCAV) programs, is seeking to develop unmanned underwater (UUV) and unmanned surface vehicles (USVs) to increase the defensive shield around aircraft carriers, amphibious assault ships, submarines, and other combat vessels.

Other nations, especially Russia and China, also are devoting more money and research to future generations of advanced unmanned vehicles and the enabling technologies expected to provide a battlespace edge in an area the U.S. has dominated since 1991.

UVs also have begun to make their mark in civilian applications. UAVs have been proposed to deliver pizzas, and UGV technology underpins proposals for driverless cars. Unmanned rotorcraft are flown by news organizations, law enforcement agencies, and farmers and ranchers, while public utilities agencies use them to survey power lines, oil and gas pipelines, wildlife populations, forest fires, and highway traffic. Terrorists and criminal organizations also are using UAVs.

As part of its initiative to certify a remotely piloted aircraft to fly in national airspace, General Atomics is creating a certifiable ground control station for MQ-9B pilots.

“We need unmanned systems that are much more survivable than existing platforms,” says Phil Finnegan, a UAV analyst for The Teal Group market analyst firm in Fairfax, Va. “The next generation needs to be stealthy and much more autonomous, so in the event communications are jammed, it can continue to carry out its mission and return. It needs to have more power, be faster, and be able to evade enemy air defenses. Those are all key technologies that will be needed for the next generation.”

Other enabling technologies and capabilities required for those advanced UVs, Finnegan adds, are:

- unmanned logistics for delivering food, water, ammunition, and batteries to forward-deployed troops without risking lives in a traditional convoy;

- refueling, primarily aerial, such as the Navy’s airborne tanker program, but also for ground troops;

- swarming, distributing capabilities among several small, less expensive UAVs; and

- low-cost, disposable platforms for swarms.

Enabling technologies

“I think we will see more and more of that due to AI [artificial intelligence] advances on the commercial and civil side,” Finnegan says. “AI is a really key area. China is making a strong push in there, which is a serious concern. The U.S. is still in the lead, but China has made this a national priority, with large investments and a huge focus.

“Another revolutionary technology is low-cost HALE [high altitude, long endurance]. Some of the systems being developed for the civil and commercial world, primarily by Airbus and AeroVironment, for example, offer tremendous potential for long-term surveillance or communications at low cost,” Finnegan says. “That is being driven by commercial programs, but will have a lot of defense and homeland-security applications.”

A key enabler in the rapid evolution in UV numbers and capabilities to date has been the satellite-based global positioning system (GPS). Providing UAVs and precision-guided munitions with precise positioning, navigation, and timing (PNT) — against which enemy forces were helpless — has given the U.S. battlespace dominance since Operation Desert Storm. Ironically, as the likelihood of facing peer or near-peer forces in any future conflict grows, a key enabling technology for advanced future UVs will allow the same or better PNT in GPS-denied environments.

“GPS made a big difference and bootstrapped a lot of things, although we want to move beyond being reliant on it to other navigation and time systems that are less vulnerable,” notes Matt Whalley, focus area lead for autonomous and unmanned systems at the U.S. Army Aviation & Missile Research, Development & Engineering Center (AMRDEC) at Redstone Arsenal, Ala. “There is a lot of development in LIDAR [light detection and ranging] for terrain and environment sensing that started out as surveying equipment, but has been repackaged and miniaturized for terrain perception. Computational systems have gotten a lot smaller and higher power.

“We’re trying to move beyond the simple interoperability levels we have now, controlling the location of the UAV, to give it the ability to behave in a more intelligent way, which can be very simple or complicated, moving beyond two people controlling one aircraft,” Whalley continues. “In certain situations, UAVs have much longer endurance than manned aircraft. The promise is there, if and when we make them more intelligent, to give them teaming behaviors that are more flexible or adaptable than what human pilots can perform.”

Sensors, networking, and secure communications will be key to accomplishing that, along with understanding the certification and qualification processes required for those platforms, Whalley adds, noting that today “different pieces are at different levels, so we’re working toward trying to pull those together.” The ability to sense and act will be key.

“It all breaks down into perception and execution,” Whalley says. “I wouldn’t say more recent developments in AI have found their way onto these systems quite yet, but that is the desire and is being considered. We’re still working on how to integrate those kinds of things.”

Adding an advanced man/machine interface is imperative, regardless of the level of AI future systems may incorporate. “There is a whole line of research on how an operator can manage multiple UAVs, which rapidly convey situational awareness to the operator through proper displays of information, all part of developing trust in the system,” Whalley explains.

Revolutionary technology

Today’s state of the art (SOTA) comprises many technologies — evolutionary and revolutionary — that make the rapid rise of UAVs to date merely prologue to what is coming, in the commercial and military arenas.

“We are leveraging a lot of advancement in lightweight computing, MEMS [micro electro mechanical systems], high-resolution cameras, then developing a new class of lightweight algorithms that can run on computers not too different from what’s in a smartphone,” says Jean-Charles Ledé, program manager for two major DARPA UV programs. “We can do this at high speed, flying through the environment and looking at the video stream in real time. A lot of the systems we use were developed for the cell phone industry, then we add advanced algorithms in computer vision, mission planning, advanced flight controls.”

Ledé’s two programs are Fast Lightweight Autonomy (FLA) and Collaborative Operations in Denied Environment (CODE), each of which has potential applications for military and civilian UVs operating in all domains.

“FLA is about developing autonomous elements in complete RF blackout, no GPS and very little imagery, with all computation and sensing done onboard the platform. The aircraft is loaded with a mission plan, takes off by itself, and flies its mission. If it runs into a dead end, it will back out and find another way through the maze,” Ledé explains. “We mostly use a combination of inertial measurements and video cameras to maintain an understanding of its position and attitude, velocities, etc., integrating that over time to know where it is in the world. We use some laser range finder and sometimes LIDAR to look at the world to avoid obstacles.”
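The position-keeping idea Ledé describes, integrating inertial measurements over time and correcting them with camera observations, can be illustrated with a toy calculation. The following is a minimal sketch only, not the FLA software: it assumes a flat 2D world, a fixed sample period, and a simple weighted blend in place of a real estimator, and the function names `dead_reckon` and `fuse` are hypothetical.

```python
import math


def dead_reckon(samples, dt):
    """Integrate forward acceleration and yaw rate into a 2D pose.

    samples: iterable of (forward_accel_m_s2, yaw_rate_rad_s) tuples
    dt: fixed sample period in seconds (an assumption of this sketch)
    Returns (x, y, heading, speed) after the last sample.
    """
    x = y = heading = speed = 0.0
    for accel, yaw_rate in samples:
        speed += accel * dt            # acceleration -> velocity
        heading += yaw_rate * dt       # turn rate -> heading
        x += speed * math.cos(heading) * dt  # velocity -> position
        y += speed * math.sin(heading) * dt
    return x, y, heading, speed


def fuse(predicted_xy, camera_xy, weight=0.2):
    """Nudge a drifting inertial estimate toward a camera-derived position.

    weight is a hypothetical tuning value: 0 ignores the camera,
    1 trusts it completely.
    """
    return tuple(p + weight * (c - p) for p, c in zip(predicted_xy, camera_xy))


if __name__ == "__main__":
    # One second of straight-line flight at 1 m/s^2, sampled at 10 Hz.
    x, y, heading, speed = dead_reckon([(1.0, 0.0)] * 10, dt=0.1)
    print(f"inertial estimate: x={x:.2f} m, speed={speed:.2f} m/s")
    print("after camera correction:", fuse((x, y), (0.50, 0.0)))
```

Because every integration step compounds sensor error, an inertial-only estimate drifts, which is why the sketch pulls the result toward an independent camera fix.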

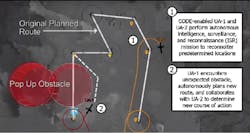

While FLA also can be used for civilian or military ground vehicles or even worn by an individual soldier to determine position and navigate even more precisely than GPS, CODE is more specifically military. Its objective is to develop algorithms to enable teams of unmanned platforms to work together. DARPA’s focus is on UAVs, but the fundamental concept can extend to multi-domain operations, with collaboration among seaborne, airborne, and ground UVs.

Algorithms for autonomy

“We’re really focusing on the algorithms for autonomy, not the platform. Small-footprint computers, embedded computers, and radios are critical parts. If you have that, I can put in the CODE software and make it a collaborative aircraft, enabling future behaviors, such as the ability to distribute tasks among UAVs, coordinate the task, and reorganize the team based on the mission. And do it in a denied environment, no longer having access to GPS and potentially RF challenged, if not denied,” Ledé continues.

Pictured is an artist’s concept of the CODE program’s focus on improving collaborative autonomy, or the capability of groups of unmanned aircraft systems (UAS) to work together under one person’s supervisory control.

“It also has to work in a target-rich environment, doing all that coordination at a very low bandwidth — no more than 50 kilobits per second. That is a key enabling technology — to look at the value of the information and only communicate what is important. Today’s UAVs will tell you their fuel levels every second, whether you need it or not, which you probably don’t. With CODE, the aircraft will only send information that is impactful and important to the rest of the team,” Ledé describes.
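One way to picture communicating only what is important under a 50-kilobit-per-second ceiling is a simple value-per-bit filter. The sketch below is illustrative and is not the CODE algorithm: the `Message` type, the relevance scores, the `min_value` threshold, and the greedy selection rule are all assumptions made for the example.

```python
from dataclasses import dataclass

BUDGET_BITS = 50_000  # one second of traffic at the 50 kbps ceiling quoted above


@dataclass
class Message:
    name: str
    size_bits: int
    value: float  # hypothetical mission-relevance score; higher matters more


def select_messages(candidates, budget_bits=BUDGET_BITS, min_value=1.0):
    """Greedily pick the highest value-per-bit messages that fit the budget.

    Messages scoring below min_value are never sent, even if bandwidth
    is left over.
    """
    chosen = []
    remaining = budget_bits
    worthwhile = [m for m in candidates if m.value >= min_value]
    for msg in sorted(worthwhile, key=lambda m: m.value / m.size_bits, reverse=True):
        if msg.size_bits <= remaining:
            chosen.append(msg)
            remaining -= msg.size_bits
    return chosen


if __name__ == "__main__":
    queue = [
        Message("target_track", 8_000, 9.0),
        Message("fuel_level", 2_000, 0.1),
        Message("video_thumbnail", 45_000, 5.0),
        Message("team_reassignment", 4_000, 7.0),
    ]
    print([m.name for m in select_messages(queue)])
```

With these made-up numbers the routine fuel report is suppressed, and the large video thumbnail is held back because it no longer fits once the two high-value messages are queued.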

Also critical to the success of CODE is developing the interface to enable man/machine communication.

“Autonomy is not independence; you have to take orders as to the purpose of the mission, and the preference of the user. All that can be expressed in a mission plan. Once the UAV understands those mission objectives, it communicates its execution plan. So autonomy is the ability to choose a course of action that achieves the commander’s intent, not choose the mission. Once a course of action is chosen, it must be communicated back to the commander for validation,” Ledé says.

“CODE just recently entered phase three, where all the advanced algorithms selected during the first two phases are being implemented into the software and demonstrated in flight. The software can be hosted on any number of computers and operating systems, so platforms can talk to each other. In phase two, we also demonstrated the ability to use live aircraft and virtual aircraft, so multiple countries, manufacturers, domains — all are possible with modified algorithms. And finally, the ability to expand capabilities beyond what we can do today, enabling future engineers to use the open architecture to upgrade systems.”

The DARPA Collaborative Operations in Denied Environment (CODE) program conducted successful Phase 2 flight tests with teams led by Lockheed Martin Corp. in Orlando, Fla., and the Raytheon Co. in Tucson, Ariz.

New technologies for future UV operations are not limited to the platform, notes Chris Dusseault, senior director of international programs at UAV designer General Atomics Aeronautical Systems Inc. (GA-ASI) in Poway, Calif.

Centralized ground control

“When UAVs were first deployed, you put the GCS [ground control station] right next to the aircraft, which would take off, go out a couple hundred miles, then return and land at that airfield. Then they decided to put all the pilots in one location — Nellis Air Force Base in Las Vegas — but you still need a local operator to deal with take-off and landing,” Dusseault says.

“The SATCOM Launch & Recovery (Automatic Takeoff and Landing) system is trying to remove the need for the forward location and pilots, which would be a significant cost saving. You still need a forward maintainer to refuel and turn on the sat system as part of a preflight check. Then the system contacts the pilots in Vegas or elsewhere, who handle the takeoff, mission, and landing, then the forward maintainer takes care of the aircraft.”

Another major effort at General Atomics is the certifiability of remotely piloted aircraft (RPA), introducing new capabilities, such as detect-and-avoid avionics, that will qualify future UAVs to be cleared by the FAA (and other global authorities) to fly in the national airspace (NAS), currently the domain of manned aviation. The company’s platform for this effort is the MQ-9B Protector, being built for the United Kingdom’s Royal Air Force. Although the Protector is a military platform, the United Kingdom (U.K.) does not have the large military air zones the U.S. has for training flights, so the aircraft must fly through civilian airspace.

Last summer, the General Atomics MQ-9B remotely piloted aircraft received FAA approval to fly in non-segregated airspace, representing another step toward eventual certification of an RPA to fly in national airspace.

“If you want to fly over a populated area, the FAA or CAA [the U.K.’s Civil Aviation Authority] will say it has to be designed to their standards, which requires significant redesign and improvements to the aircraft to give it the same airworthiness as manned aircraft. It has to be able to handle direct lightning strikes, harsh weather, and have all the design margins of FAA standards,” says General Atomics’s Dusseault.

“Once certified, the MQ-9B Protector will break the mold on what it takes to get an aircraft certified for NAS operations, which will have a significant impact on all future UAVs, including those for commercial applications. A large part of what we’re doing is applying known manned aviation standards to UAVs, but the development side is really the Due Regard Radar (DRR), which GA is building as a project that can go on any aircraft large enough to accommodate it,” Dusseault says.

The DRR comprises a two-panel active electronically scanned array (AESA) antenna and a radar electronics assembly to enable a UAV pilot to detect and track aircraft across the same field-of-view as a manned aircraft. AESA technology allows DRR to track several targets while simultaneously continuing to scan for new aircraft.

Deconflicting unmanned traffic

“We’re focused on deconflicting the low-altitude airspace and safe airspace integration, enabling beyond visual line-of-sight flying, using sensors that create an accurate 3D image of what’s out there. You also can use that data for security applications around critical infrastructure,” says Craig Marcinkowski, vice president of strategy and business development at Gryphon Sensors.

“Everything we do right now is basically ground sensors — radars, EO/IR cameras, and weather sensors — to get an airspace picture. That can be used in multiple models, using a common infrastructure. In an unmanned traffic management [UTM] system, we can interact with other sensors. We’re doing fixed infrastructure around test sites, for example, but we also have our Mobile Skylight System on a 35-foot telescoping mast, with multiple masts and different sensors in use at the same time,” Marcinkowski says.

Gryphon’s approach seeks to fill the gap from ground to “below the radar” levels — basically, 500 feet and below.

“In radars, we design sensors from the ground up for UAV traffic management — the low altitude, low-slow-small mission is a very difficult target for radar, which is designed to see larger craft at high altitudes. These are full 3D AESA systems, leveraging next-generation chip technology, including some of our own ASIC chips,” Marcinkowski explains.

“The FAA’s see-and-avoid requirement for pilots created a new problem for unmanned aircraft. We began using ground radar to detect non-cooperating items and show the FAA we can reliably detect those for unmanned aircraft. That low-altitude aircraft picture didn’t really exist until recently, but now that is an emerging and growing market. We’re focused on making the system smarter, with more automation.”

Ground robots

At the Army Tank Automotive Research, Development and Engineering Center (TARDEC) in Warren, Mich., Dr. Robert W. Sadowski, the Army’s chief roboticist, is focusing on Army-specific requirements and future UGVs designed to integrate smoothly into ground force operations and combat environments. That includes the Army’s watercraft mission autonomy and some work with transformable systems for riverine operations, but TARDEC’s predominant focus is on UGVs and small UAVs. The work also covers landing small UAVs on moving ground platforms, a difficult task, but essential to the future use of short-range micro-UAVs.

UGV development has lagged behind UAVs, but the push is now on, especially in light of aggressive Russian and Chinese programs in the land domain.

“Good displays and information technology; a secure wireless network that can handle the EW [electronic warfare] and spectrum challenges of the future; reasonable SWaP [size, weight and power], high-performance sensors that are small, ruggedized, and don’t cost a lot; targeting systems; good (and cheap) IR — if you’re going to fight robots in the future, all those are going to be needed,” Sadowski says.

It is and will remain essential to have UV operators in the same battlespace as the platforms to decrease signal latency, which is an issue when trying to drive a platform on the ground at suitable speeds, Sadowski says.

“In some ways, it’s a tougher problem on the battlefield than NASA faces with its robots on Mars. The ground domain is really hard because it is so cluttered compared to the air and sea domains. We’re trying to get latencies under 100 milliseconds — closer to 50 — especially for high-speed, better-than-human speeds. We’ve done some long-distance testing over satellite, but it is a challenge,” Sadowski explains.
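The latency targets Sadowski cites are easier to appreciate as distance traveled before a delayed video frame or steering command arrives. The arithmetic below is a rough sketch: the 72 kilometer-per-hour speed and the 600-millisecond satellite figure are illustrative assumptions, not numbers from TARDEC, and `blind_distance_m` is a hypothetical helper name.

```python
def blind_distance_m(speed_kph, latency_ms):
    """Distance in meters a vehicle covers during one latency interval."""
    speed_m_per_s = speed_kph * 1000.0 / 3600.0
    return speed_m_per_s * latency_ms / 1000.0


if __name__ == "__main__":
    speed_kph = 72.0  # assumed driving speed, equal to 20 m/s
    for label, latency_ms in [
        ("50 ms goal", 50.0),
        ("100 ms ceiling", 100.0),
        ("assumed satellite link", 600.0),
    ]:
        meters = blind_distance_m(speed_kph, latency_ms)
        print(f"{label}: {meters:.1f} m traveled before the operator's input lands")
```

At the assumed speed, halving latency from 100 to 50 milliseconds halves the distance the vehicle covers on stale information, which is the margin a remote driver has for reacting to obstacles in cluttered terrain.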

Gryphon Sensors in Syracuse, N.Y., also is working to enable UAV operations in the NAS, using off-platform technology to satisfy the FAA’s strict sense-and-avoid requirements.

Autonomy: How much is too much?

Any unmanned vehicle, to be fully operational in a complex and confusing battlefield environment, must have some degree of autonomy. One of the major debates among the military, commercial users, politicians, and technologists themselves is what level of artificial intelligence (AI) is required and acceptable in next- and future-generation UVs and other robotics. That is further complicated by the lack of a universally accepted definition of AI.

The development of “smart” systems, from military walking robots able to navigate rough terrain without human guidance to smartphone digital assistants, falls on a continuum of machine learning that runs from adaptive (the ability to adapt to a new situation or information in real time) through cognitive (self-learning systems that use data mining, pattern recognition, and natural language processing to mimic the way the human brain works) to true AI (self-awareness and the desire and ability for self-improvement).

The goal of the DARPA Fast Lightweight Autonomy (FLA) program is to develop algorithms for minimalistic high-speed navigation that let small UAVs fly through cluttered environments and fit through windows.

Although the U.S. has the lead in research and deployment of UVs in all domains, the realization that other nations are closing the gap rapidly has led to increased funding, new strategy documents, and the stand-up of new organizations. One of those is a TRADOC program office on Maneuver Robotics and Autonomous Systems (MRAS) under the Army Maneuver Center of Excellence (MCoE) at Fort Benning, Ga.

“The Russians have aggressively pursued this, as have the Chinese, so I’m very happy our MCoE understands the problem,” Sadowski says. “The U.S. is very good at aggregating technologies and figuring out the best way to fight and win with these systems. The question is, are we going to be playing catch-up or staying ahead?

“The MRAS office is in the early steps, using simulation exercises to help inform what they want, how to incorporate RAS [robotics and autonomous systems] within the infantry and armored brigade combat teams. They are staffing an initial capabilities document, probably to be released in the next couple of months,” Sadowski continues. “They will then have an operational view of what they think will be their needs across the spectrum, from logistics to leader-follower, engineer support, remoting lethality so the robot, not the soldier, is in harm’s way.”

Basically, all the military services are examining the proper employment of robotic systems, with security and cyber control, for each warfighting option, which Sadowski calls real progress.

“The MRAS strategy document was very high-level, while this gets down to the domain level, how to actually fight with robots, developing the right technologies to make these robotic systems members of the team,” Sadowski says. “We’ve been working that for a while, but this is a more formal process on how to survive on the very lethal battlefield of the future. This is robotics at a much higher, more intense level than what we used in Iraq and Afghanistan.

“Those concepts then will become part of the requirements for future UVs, incorporating real-time processing of video feeds to provide effective perception in a complex electromagnetic environment and synthesized so the commander can make decisions quickly,” Sadowski says. “The goal will be to do reasoning at the tactical level, making the mechanical platform part of the team.”

Human/machine interfaces

“There is a lot of work that needs to be done on human/machine interface, cognitive load — these are things under active pursuit in the labs now,” Sadowski continues. “The goal is how to do real-time updates, advanced situational awareness, solving the perception and prediction problems. Most of what we’re working on now is more deterministic systems. AI is less deterministic.

Military parachute rescue men practice a personnel recovery mission during the PJ Rodeo Competition near Patrick Air Force Base, Fla. DARPA’s Fast Lightweight Autonomy program is developing autonomous drone technology to give these highly trained specialists better situational awareness.

“Some amazing things are being done in that space. Start off with perception: Is that a car, a person, a bicycle? Then prediction, taking stuff between frames and stitch together a temporal message, which leads to planning,” Sadowski says. “Prediction has not yet been done by neural nets. With cameras all around my military vehicle, looking 360 degrees, you can train a system now to identify another vehicle that will be getting into your path, predict its path and location, and react accordingly. However, the contextual cues humans use on a daily basis are not yet there — identifying whether an object is someone holding a shovel or holding an AK-47.”

An autonomous system can pick out potential dangers constantly, passing that information back from a forward position to a squad.

“That is something we haven’t had before,” Sadowski points out. “We also need to give natural language speech to the robot that it can understand, respond to, and get back to me in natural language, not ones and zeroes. That heterogeneous teaming of man and machine in a tough environment is what we must have to make this relevant. Using robotics to create a protective bubble in the reconnaissance effort is where we’re really trying to go, to give smaller forces better situational awareness and do so affordably,” Sadowski says.

The multi-domain battle concept is being developed in concert with the Marine Corps. The Army has done a lot of automation of heavy ground logistics, especially leader-follower technology, and so doesn’t need 100 percent autonomy. That is now being taken to the next level, building two medium truck company sets, 30 trucks each, to be delivered in a year or so.

“The Marines are looking at delivering 3,000 pounds of cargo autonomously, ground-to-ground or sea-to-ground. The Army likes the idea, but isn’t investing in it,” Sadowski says. “There are some unique Marine characteristics, such as dealing with very heavy sand and surf, so they probably want something with tracks, where the Army is actively trying to develop small ‘mules’ that can go for 72 hours.”

COMPANY LIST

AeroVironment Inc.

Monrovia, Calif.

https://www.avinc.com

Airbus

Toulouse, France

http://www.airbus.com

Army Aviation & Missile Research, Development & Engineering Center (AMRDEC)

Redstone Arsenal, Ala.

www.army.mil/info/organization/unitsandcommands/commandstructure/amrdec

Army Tank Automotive Research, Development and Engineering Center (TARDEC)

Warren, Mich.

https://tardec.army.mil

U.S. Defense Advanced Research Projects Agency (DARPA)

Arlington, Va.

https://www.darpa.mil

General Atomics Aeronautical Systems Inc.

Poway, Calif.

http://www.ga-asi.com

Gryphon Sensors LLC

Syracuse, N.Y.

http://gryphonsensors.com

The Teal Group

Fairfax, Va.

http://www.tealgroup.com

Toyota Research Institute Inc.

Los Altos, Calif.

http://www.tri.global