Test-and-measurement tools struggle to keep up with shrinking chips

Test-equipment manufacturers are rising to the challenges presented by ever-smaller chips, while adopting new techniques to meet the Pentagon's demand that the tools be more generic and flexible.

By Ben Ames

Chips and buses are getting smaller and faster every day. For engineers trying to test military electronics, that means trouble. Test-and-measurement equipment is pushed to its limits trying to keep up with the latest, smallest circuits.

At the same time, Pentagon planners are trying to cut costs by buying generic, flexible test equipment instead of the more than 400 different types of military and aeronautic test equipment used today. Trying to meet both goals at once, test-equipment manufacturers are turning to techniques like the PXI form factor and synthetic instrumentation.

Submicron circuit geometries

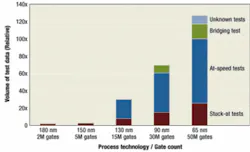

Finding chip defects gets more difficult as manufacturers of integrated circuits continue to shrink their products. Seeking greater computing density, they have shrunk their process geometries in recent years to 130 nanometers, 90 nanometers, and even 65 nanometers.

Today, engineers must run 20 or 30 times more tests to catch defects than with larger geometries, adding time and cost. At the same time, these complex chip designs push the gate-to-pin ratio even higher, pinching bandwidth for traditional scan test methods.

So manufacturers of test-and-measurement equipment are scrambling to build tools that can keep up.

One option is TestKompress from Mentor Graphics. It uses embedded deterministic test (EDT) and compression techniques to shrink the test data volume and test time, compared to state-of-the-art scan and automatic test pattern generation (ATPG) methods. The technique pairs on-chip hardware with software that generates compressed test patterns for deterministic ATPG.

"We're able to compress the operation because our hardware/software combination focuses on just the necessary bits," says Greg Aldrich, director of product marketing in the design for test division at Mentor Graphics, Wilsonville, Ore.

"We store a small test pattern, one-hundredth the size of a full pattern, and on-chip hardware generates the rest for stimulus and response patterns. Then it compresses the data again on the way out. And on hardware, the system compares that signal to the expected response."

For instance, in an at-speed test, a user looks for a slight variation in speed due to resistive wires, he says. The most common type of at-speed test is a transition pattern, which targets slow-to-rise or slow-to-fall signals.

Customers are seeing more resistive failures in these transition patterns in the latest, smallest, geometries, he says.

As they move from 180 to 130 nanometers, users see a 12-to-20-times increase in the number of at-speed failures. The geometries are so small that they cause three problems: the interconnect density gets too high; chips see materials failure; and misalignments become more frequent in stacks of 10 layers or more.

Engineers also run stuck-at tests, which determine whether any nodes are stuck at zero or one. Compared to at-speed tests, this pattern requires much more data coming into and out of the chip; bandwidth is more important than speed for greater accuracy.
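As a rough illustration of what a stuck-at test checks, the sketch below simulates a tiny, hypothetical two-gate circuit, forces each node to zero or one in turn, and lists the input patterns whose output exposes the fault. The circuit, the node names, and the function names are assumptions made for illustration; this is not a model of any real device or of a Mentor Graphics tool.

```python
from itertools import product

# Hypothetical circuit: out = (a AND b) OR c, with one internal node n1.
NODES = ("a", "b", "c", "n1", "out")


def simulate(a, b, c, fault=None):
    """Evaluate the circuit, optionally with one node stuck at 0 or 1.

    fault is None or a (node_name, stuck_value) pair.
    """
    def force(name, value):
        # A stuck-at fault overrides whatever the logic would have driven.
        if fault is not None and fault[0] == name:
            return fault[1]
        return value

    a, b, c = force("a", a), force("b", b), force("c", c)
    n1 = force("n1", a & b)
    return force("out", n1 | c)


def detecting_patterns(fault):
    """Return every input pattern whose output differs from the fault-free circuit."""
    return [p for p in product((0, 1), repeat=3)
            if simulate(*p) != simulate(*p, fault=fault)]


if __name__ == "__main__":
    for node in NODES:
        for stuck in (0, 1):
            print(f"{node} stuck-at-{stuck}: {detecting_patterns((node, stuck))}")
```

Even in this toy case, some faults are exposed by only one input pattern, which hints at why full stuck-at coverage on a large chip moves so much data through a limited number of pins.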

Elsewhere on the device, shrinking die sizes also cause defects in embedded memory, which is usually the densest part of a chip, Aldrich says. These mistakes are hard to find with conventional BIST (built-in self-test) methods, so Mentor Graphics makes the MBIST Architect product to test devices built on geometries below 90 nanometers.

PXI helps test equipment keep up

The rules of test-and-measurement technology have always demanded some tradeoffs. Users could choose either frequency or accuracy. And if they wanted power, the most accurate test computers took up serious space, stacking up an entire wall of instruments.

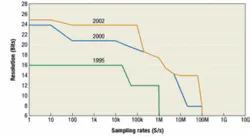

Now those rules are changing, says Greg Caesar, PXI product marketing manager for National Instruments in Austin, Texas. A nine-year graph of sampling rate versus resolution for National Instruments' test hardware shows that users today can have both at once: more-frequent samples and more-accurate resolution.

The company's NI PXI-5670 is a 2.7-GHz RF vector signal generator that offers 16-bit resolution at 100 megasamples per second, backed up by 256 megabytes of memory and 22 MHz of bandwidth.

As for size, new designs continue to shrink the equipment. Customers are choosing increasing numbers of PXI-based applications. PXI, short for PCI eXtensions for Instrumentation, is a modular, 3U form factor that is smaller and faster than its predecessor, VXI.

PXI is an open specification governed by the PXI Systems Alliance (see www.pxisa.org), defining a rugged, CompactPCI-based platform optimized for test, measurement, and control. PXI products remain compatible with the widely used CompactPCI industrial computer standard, but also have additional features like environmental specifications, standardized software, and built-in timing and synchronization.

For example, when engineers at the Lockheed Martin Aeronautics Co. in Fort Worth, Texas, had to perform wind-tunnel testing on the F-35 Joint Strike Fighter, they chose a data-acquisition tool with PXI instead of VME, doubling their channel density and performing the tests 80 times faster.

To wind-test an F-35 turbojet engine, they used the National Instruments LabVIEW Real-Time software with 16 PXI-based signal-acquisition modules from NI partner G Systems of Plano, Texas. Together, they ran 450,000 floating-point calculations every 50 milliseconds, while measuring air pressure across 128 channels at 20,000 samples per second.
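A back-of-the-envelope check puts those figures on a common per-second footing. The short calculation below uses only the numbers quoted above, plus one labeled assumption: that the 20,000 samples per second applies to each channel, which the text does not state.

```python
# Figures quoted in the article.
calcs_per_window = 450_000     # floating-point calculations per window
window_ms = 50                 # window length in milliseconds
channels = 128
samples_per_second = 20_000    # ASSUMPTION: per channel; the article does not say

# Sustained calculation rate over one second.
calcs_per_second = calcs_per_window * 1000 // window_ms

# Aggregate sample rate across all channels (under the per-channel assumption).
aggregate_samples_per_second = channels * samples_per_second

# Samples arriving during one 50-millisecond window, and work done per sample.
samples_per_window = aggregate_samples_per_second * window_ms // 1000
calcs_per_sample = calcs_per_window / samples_per_window

print(calcs_per_second)              # 9000000
print(aggregate_samples_per_second)  # 2560000
print(samples_per_window)            # 128000
print(calcs_per_sample)              # 3.515625
```

If the 20,000 samples per second were instead the total across all 128 channels, the aggregate rate would be 128 times lower and the work per sample correspondingly higher.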

In another application, engineers at ManTech International, of Fairfax, Va., recently designed a technology upgrade of test systems for Air Force LANTIRN (low-altitude navigation and targeting infrared for night) pods, used on the F-14, F-15, and F-16 fighter jets. Changing from VME to PXI-based computing, they were able to shrink the test equipment from seven to three racks, saving cost, maintenance, and deployment time.

Likewise, memory provider Conduant Corp. of Longmont, Colo., now offers PXI-bus data capture, supporting up to eight serial ATA disk drives.

That means engineers performing test and measurement can better record data from their tools. They can record up to 200 megabytes/second of benchtop instrumentation data using hardware like Conduant's StreamStor PXI-808 real-time record and playback disk controller, and Big River DM4 1U rackmount chassis.

"Extending our product line to support PXI offers our customers a highly configurable and dependable solution with performance up to 200 megabytes/second, the fastest in the industry," says Tom Skrobacz, Conduant's vice president of business development. "That provides a cost-effective advantage to 32-bit users coupled with a significant performance increase."

Also moving from VXI to PCI or PXI is Symmetricom of San Jose, Calif. The company sells time and frequency references to manufacturers of automated test equipment (ATE). Precision timing is crucial for accurate testing. It lets engineers synchronize components in applications from radar and communications to telemetry, avionics, and flightline testing. In addition, it allows them to test each component's performance, like the gain of a certain amplifier, says John Hirsekorn, vice president of sales and marketing for the timing, test, and measurement division.

Symmetricom offers three types of timing systems, each of which produces precision timing data from the oscillation of a specific reference material: cesium, rubidium, or quartz. Rubidium is the most popular now because it offers better long-term stability than quartz and costs less than cesium. That makes it a good fit for the test equipment used on an aircraft carrier's flight deck, since it starts up quickly (reaching specified performance within 30 minutes) and stays stable, he says. The company's current rackmount versions run on VXI, but Symmetricom designers will produce a PCI version within a year, he says. That version will be faster, less expensive, and more flexible than the existing products.

Pentagon demands standard testing tools

Everyone wants to save money. That has been particularly true for users of test equipment since the release of a March 2003 report from the U.S. General Accounting Office (GAO), "DOD needs to better manage automatic-test-equipment modernization."

GAO investigators revealed that DOD technicians use more than 400 different types of test equipment to diagnose problems in avionics and weapons system components. DOD planners spent more than $50 billion to acquire and support that test equipment between 1980 and 1992, building a pool of equipment that was so specialized that each machine could test only one device.

Navy leaders alone spent $1.5 billion between 1990 and 2002 to acquire test equipment, and by 2007 plan to spend an additional $430 million on acquisition, $384 million on maintenance upgrades, and $384 million on new weapon-system testers, the report says.

In 1994, DOD planners decreed that future test equipment must use commercial off-the-shelf components and must not be specified to test only single platforms, but rather should interoperate with whole families of equipment.

Navy planners responded with CASS, the consolidated automated support system, which would replace 25 testers with one standard system, able to support 2,458 weapons components.

Budget cuts slowed the plan, and by its original completion date of 2000, planners had completely replaced only four of the 25 testers, and partially replaced eight more. Today the completion date is 2008 at the earliest.

Another initiative is ARGCS, the agile rapid global combat support system. This program's goal is to create common test software that all DOD service branches and American allies can use. For instance, the system must work on Navy and Marine F/A-18 and E-2 aircraft, Army Paladin self-propelled howitzers, and Army and Marine Avenger missile launchers, as well as on platforms used by the U.S. Air Force and by British and Spanish forces.

It is scheduled for prototype development from 2004 to 2006, and testing and fielding from 2006 to 2008.

Manufacturers respond

Leaders of the test industry saw changes in the defense sector last year as Pentagon planners, spurred by this report, scrambled to cut costs, says John Stratton, aerospace and defense solutions planner at Agilent in Loveland, Colo.

Those planners face a challenge: if they replace obsolete test equipment, they must preserve the test program sets (TPS) used to requalify equipment. Yet the TPS will be useless unless the new test equipment is compatible with the old platforms.

One solution is to use synthetic instruments, as opposed to the standard rack solutions used today, he says. A synthetic instrument replaces a discrete test unit with software algorithms and hardware modules that typically fall into three types: a signal conditioner (such as an up or down converter); a data converter (such as a digitizer or a waveform generator); and a processor (running on a digital signal processor or on the software of a host computer). It is a similar approach to the technology behind software-defined radio, Stratton says.
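The three-module split can be sketched in software. The example below is a minimal simulation, not Agilent's design or any vendor's API: a complex mixer stands in for the down converter, a rounding function stands in for the digitizer, and a plain discrete Fourier transform stands in for the processor. All function names, the 12-bit width, and the sample values are assumptions chosen for illustration.

```python
import cmath
import math


def down_convert(signal, sample_rate_hz, lo_hz):
    """Signal conditioner: complex mix that shifts the spectrum down by lo_hz."""
    return [x * cmath.exp(-2j * math.pi * lo_hz * n / sample_rate_hz)
            for n, x in enumerate(signal)]


def digitize(signal, bits, full_scale=1.0):
    """Digitizer: round the I and Q parts to signed integer codes of the given width."""
    top = 2 ** (bits - 1) - 1

    def code(v):
        return max(-top, min(top, round(v / full_scale * top)))

    return [complex(code(z.real), code(z.imag)) for z in signal]


def strongest_tone_hz(codes, sample_rate_hz):
    """Processor: DFT over the positive-frequency bins; return the peak frequency.

    Searching only positive bins ignores the mixer image at -(f + lo).
    """
    n = len(codes)

    def magnitude(k):
        return abs(sum(z * cmath.exp(-2j * math.pi * k * i / n)
                       for i, z in enumerate(codes)))

    peak_bin = max(range(n // 2), key=magnitude)
    return peak_bin * sample_rate_hz / n


def measure_frequency(signal, sample_rate_hz, lo_hz, bits=12):
    """Chain the three modules, then add the oscillator frequency back."""
    baseband = down_convert(signal, sample_rate_hz, lo_hz)
    codes = digitize(baseband, bits)
    return lo_hz + strongest_tone_hz(codes, sample_rate_hz)


if __name__ == "__main__":
    fs = 1000.0
    tone = [math.cos(2 * math.pi * 250.0 * n / fs) for n in range(200)]
    print(measure_frequency(tone, fs, lo_hz=200.0))
```

Because each stage is an ordinary function with a narrow interface, swapping the digitizer width or replacing the processor with a different measurement changes one module and leaves the others alone, which is the flexibility the synthetic approach aims for.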

This approach could deliver cost savings for the government, which could shop for competitive prices on modules from different suppliers. It also would deliver much more flexibility for the system integrators who assemble the components.

A synthetic instrument would be interoperable with all U.S. and NATO service branches, and also reduce the size and weight of test equipment, easing deployment to distant bases, he says. Finally, it would enable designers to develop test equipment before new weapon systems are completely built.

It could also be a solution to the Pentagon's long-term test-equipment obsolescence problem, he says. Because a generation of VME-based VXI-format equipment now seems slow and bulky by today's standards, many test-equipment manufacturers are building CompactPCI-based PXI-format equipment. Soon they will have to upgrade those machines to PCI Express, and then to yet another platform. Synthetic instruments could keep up with simple software upgrades.

Another approach to supporting older test systems is to shop in the used equipment market. Engineers at Agilent now support that route by certifying pre-owned test instruments, says Rice Williams, marketing manager in the financial solutions unit.

"When the economy took a nosedive, a tremendous amount of equipment came onto the used equipment market, from telcoms, downsizes, and failed dot-coms," he says.

In a new program, Agilent supports the 150 most popular models by certifying remanufactured equipment. Company technicians also will calibrate and provide warranties for used equipment, and offer software and firmware upgrades for older equipment.

The company even has an agreement with the online auction site eBay to provide assurance for used test equipment bought online. That's big business; engineers buy nearly $50 million of test equipment every year on the site, Williams says.

"If it's really old test equipment, eight or 10 years old, then the used equipment market is the only place to get it," he says. "The advantage is that Agilent will certify what a buyer might otherwise see as a risky proposition, buying a $15,000 spectrum analyzer from an unknown seller."

Prices have been rising slowly as some companies begin to recover from the recession, but Agilent planners say they believe the used equipment market will still be popular.

"We think there will be continued pressure on military budgets, especially with a $500 billion deficit," Williams says.

Flexible equipment tests many devices

"There's a graying of the line between commercial and military avionics, because military designers are increasingly using COTS gear," says Kevin Christian, customer services manager at Ballard Technology in Everett, Wash.

For example, the Airbus A380 passenger jet and the Boeing C-17 military cargo transport use the ARINC 429 databus, originally developed for strictly commercial applications.

The trend also runs the other way: many commercial aircraft use military technology for things like missile warning systems, using the MIL-STD-1553 databus.

For a technician running test-and-measurement machines, that signals a need for converters between the two standards. One solution is Ballard's new Omnibus card, offering modules that support both protocols.

The card relies on several digital signal processors to handle the data, and a PowerPC microprocessor to manage the system. The card includes 2 gigabytes of flash memory and drivers to run its own software, Ballard's CoPilot 4.0.

"You don't need a host computer anymore; the card is handling it all: signal generating and processing," Christian says. Because the card can run independently of a host computer, it must handle its own communications, so it also features Ethernet ports and its own IP address. That way, a user can network the card and use it remotely from an office or laboratory.

New munitions need special tests

Another challenge for test-and-measurement tools is gaining enough flexibility to keep up with new devices.

"The services have always needed to test smart munitions, but now there's a need for systems to address a new class of miniature weapons, specifically the Small Diameter Bomb," says Terry Thames, business development manager for ATK in Clearwater, Fla.

That is a difficult demand because test tools must remain backward compatible with older weapons, while adding power and staying sealed against harsh environments.

Whether they are on an Air Force base or an aircraft carrier flight deck, technicians must test the capabilities of each weapon before they load it on a plane. That means they power up each live weapon to ensure it has sound circuits and the latest software. They also test modular weapons parts like the Joint Direct Attack Munition (JDAM) strap-on tail kit to verify the part works before taking time to assemble an entire smart bomb.

One tool for the job is ATK's CMBRE, the Common Munitions BIT/Reprogramming Equipment, a rugged, portable computer for testing smart weapons. It has been available for several years, but now faces an upgrade to handle the new platforms.

Federal planners are still choosing a common standard for the miniature smart weapon protocol, so test-machine manufacturers can't launch final products for two to four years.

In the meantime, they know the upgraded tester must have certain strengths, Thames says. "We'll need more software, faster data-transfer rates, and new communications protocols." That is because new munitions use new databuses, like the EBR (Enhanced Bit Rate) 1553 used in the Small Diameter Bomb.

For the next version, ATK engineers will design CMBRE with write-protected memory to block viruses and boost security, and make it compatible with Windows XP as well as the standard DOS operating system.

In the long run, it will be flexible enough to work with various weapons, because users can simply insert PCMCIA cards with weapon-specific software, provided by each weapon's prime contractor. Those cards also hold the majority of memory, and that makes CMBRE simple to declassify, since it will not store any classified data on board.

One challenge they still face is paring enough weight to make it man-portable while still preserving its environmental shielding — particularly from the enormous EMI (electromagnetic interference) on an aircraft carrier deck. Today the computer itself is 14 pounds, but the full system weighs 42 pounds, including cables.

The COTS-based, PC/104-format computer will have limited processing speed because designers cannot use cooling fans without compromising environmental shielding, and they cannot use variable-speed processor chips without falling out of spec. So they will probably use a lower-speed Intel Pentium chip, of at least 400 MHz, Thames says.